Questions

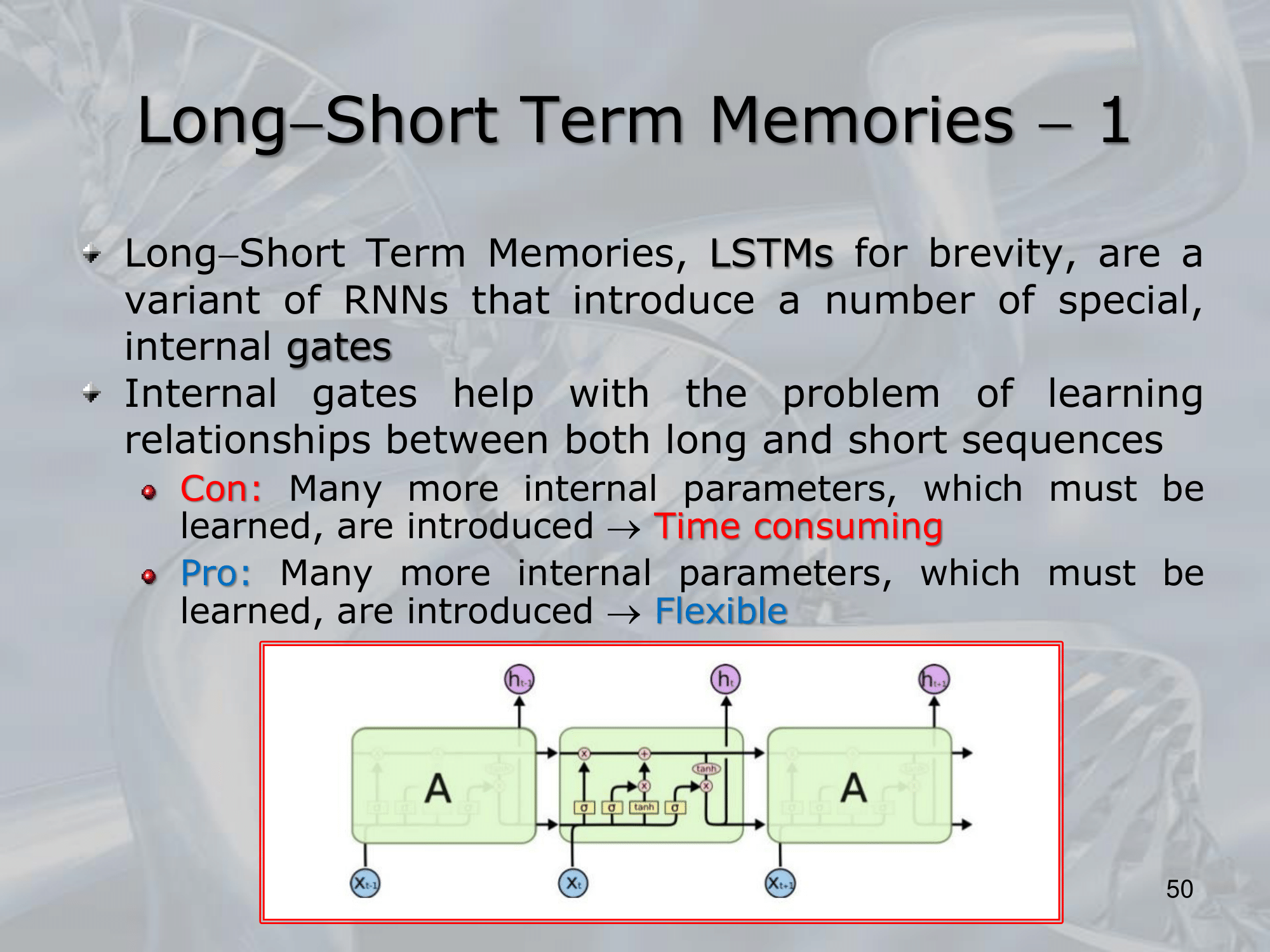

- What are Long Short-Term Memory networks (LSTMs)?

- Long Short-Term Memory networks (LSTMs) are a type of recurrent neural network architecture designed to mitigate the vanishing gradient problem and improve the network’s ability to capture long-term dependencies in sequential data. (Exploding gradients can still occur and are usually handled separately, for example with gradient clipping.)

- At their core, LSTMs are similar to traditional recurrent neural networks in that they use feedback connections to maintain an internal memory of previous inputs.

==However, LSTMs include several additional components, including memory cells and gating mechanisms, that allow the network to selectively store and retrieve information over multiple time steps==.

- The key component of an LSTM is the memory cell, which is a self-contained unit that can store information over time.

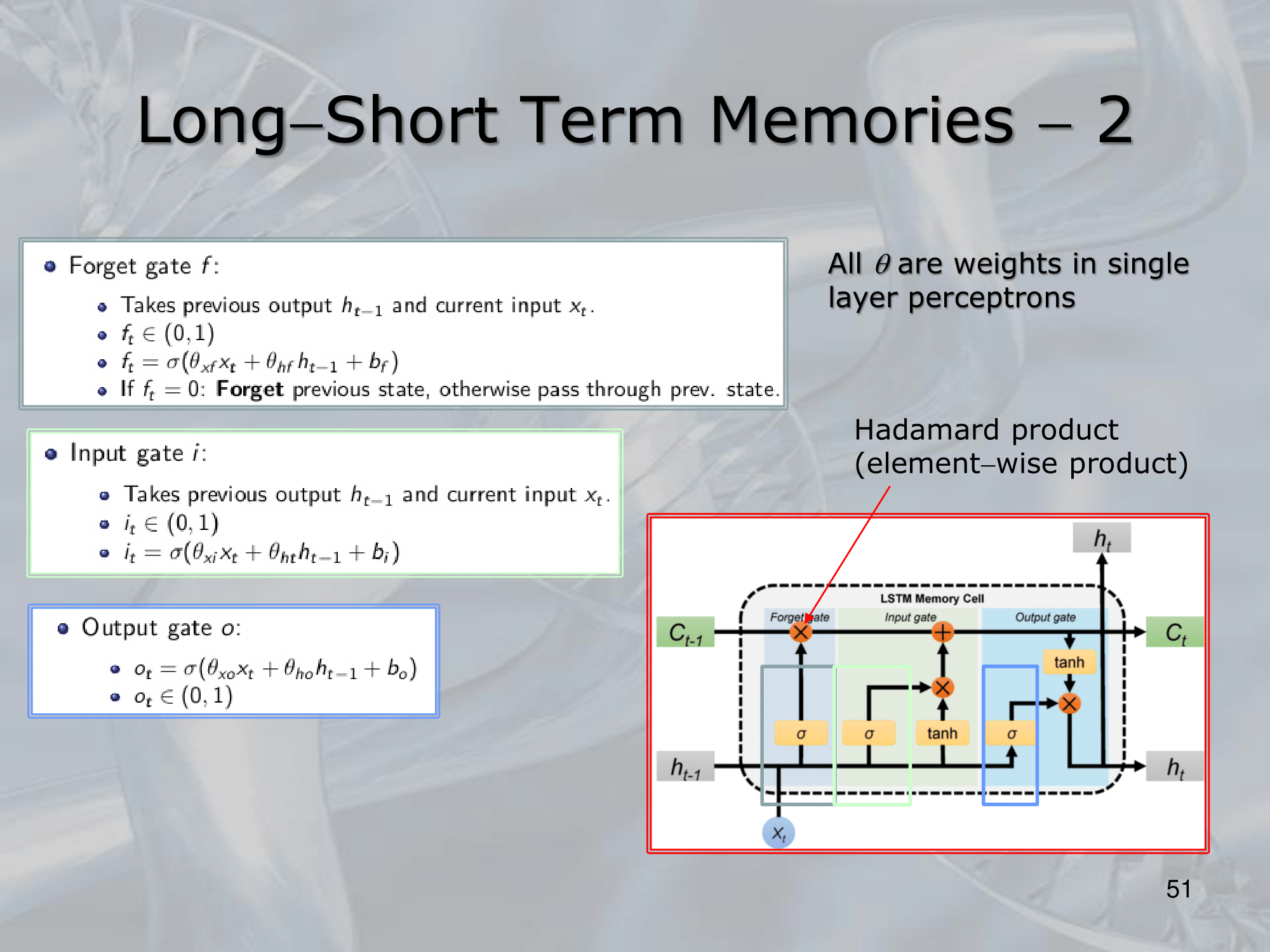

The memory cell is controlled by three gating mechanisms: the input gate, the forget gate, and the output gate.

- ==The input gate controls how much new information is added to the memory cell==.

- ==The forget gate controls how much information is retained from the previous memory state==.

- ==Finally, the output gate controls how much information is read out from the memory cell to generate the network’s output==.

- Together, these gating mechanisms allow LSTMs to selectively store and retrieve information over multiple time steps, making them well-suited to tasks that require the network to remember long-term dependencies in sequential data.

- LSTMs have been used successfully in a wide range of applications, including speech recognition, natural language processing, and time series prediction.

Their ability to capture long-term dependencies and to mitigate vanishing gradients has made them a popular choice for modeling complex sequential data in deep learning.
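To make the idea of "selectively storing" information concrete, here is a minimal scalar sketch of the cell-state path only. It assumes fixed, hand-picked gate values (`forget`, `write`) and a constant `candidate`, all of which are hypothetical. In a real LSTM, these are recomputed at every step from the current input and the previous hidden state. `carry_memory` is an illustrative helper, not a library API.

```python
def carry_memory(steps, forget, write, candidate, c0=1.0):
    """Iterate the scalar update c <- forget * c + write * candidate.

    forget, write and candidate are held fixed for every step (a simplification:
    in a real LSTM they change at each time step).
    """
    c = c0
    for _ in range(steps):
        c = forget * c + write * candidate
    return c


# Forget gate fully open, input gate closed: the stored value survives 100 steps.
print(carry_memory(100, forget=1.0, write=0.0, candidate=0.7))  # 1.0
# Forget gate at 0.5: the memory shrinks geometrically, to 0.5 ** 100 of its start.
print(carry_memory(100, forget=0.5, write=0.0, candidate=0.7))
```

Along the direct cell-state path, the derivative of the new cell state with respect to the previous one is just the forget gate value. A forget gate close to 1 therefore lets both the stored value and its gradient pass through many steps.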

—————————————————————

Online Resources

—————————————————————

Slides with Notes

NOTE: The previous hidden state $h_{t-1}$ and the input $x_t$ are combined into a single vector, which is then passed to 3 sigmoid layers and 1 tanh layer. The GIF shows this clearly, but to avoid confusion we note that $h_{t-1}$ and $x_t$ are stacked (concatenated) together.
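The stacking can be checked numerically. This NumPy sketch builds $z_t = [h_{t-1}, x_t]$ and confirms that one weight matrix applied to the stacked vector equals two separate matrices (one for $h_{t-1}$, one for $x_t$) whose outputs are summed. The sizes and random values are arbitrary choices for illustration. This equivalence is why some texts write $W z_t$ and others write $W_h h_{t-1} + W_x x_t$.

```python
import numpy as np

rng = np.random.default_rng(0)
hidden_size, input_size = 3, 2
h_prev = rng.standard_normal(hidden_size)  # h_{t-1}
x_t = rng.standard_normal(input_size)      # x_t

# Stack h_{t-1} and x_t into one vector z_t = [h_{t-1}, x_t]
z_t = np.concatenate([h_prev, x_t])

# One weight matrix acting on the stacked vector ...
W = rng.standard_normal((hidden_size, hidden_size + input_size))
stacked = W @ z_t

# ... equals two separate matrices, one for h_{t-1} and one for x_t, summed
W_h, W_x = W[:, :hidden_size], W[:, hidden_size:]
separate = W_h @ h_prev + W_x @ x_t

print(z_t.shape)                       # (5,)
print(np.allclose(stacked, separate))  # True
```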

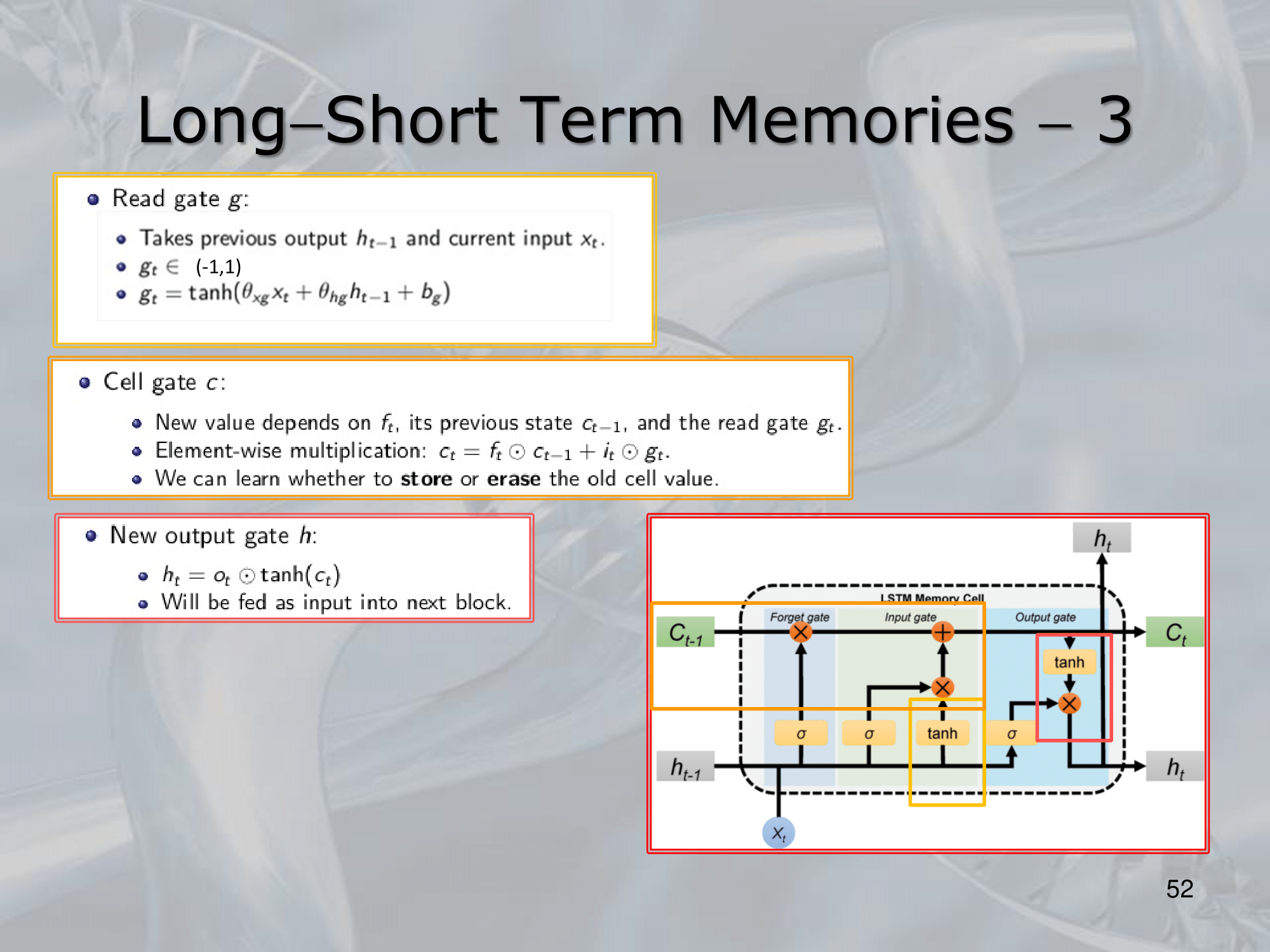

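FORMULAS:

- $z_t = [h_{t-1}, x_t]$: complete input vector
- $f_t = \sigma(z_t)$: forget gate
- $i_t = \sigma(z_t)$: input gate
- $\tilde{C}_t = \tanh(z_t)$: candidate values (candidate gate)
- $o_t = \sigma(z_t)$: output gate, or read gate

These are not the exact formulas (they only show the dependencies: every gate depends on $z_t$). At each gate, the LSTM cell multiplies the input by its own matrix of parameters and adds a bias, e.g. $f_t = \sigma(W_f z_t + b_f)$, and likewise with $W_i, b_i$ for $i_t$, $W_C, b_C$ for $\tilde{C}_t$, and $W_o, b_o$ for $o_t$.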
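A minimal NumPy sketch of the four gate computations follows. It assumes the four weight matrices and biases are stacked into one `W` of shape `(4 * hidden, hidden + input)` and one `b`, in the order f, i, C~, o. That ordering and the names `lstm_gates` and `sigmoid` are choices made for this sketch. Libraries may stack the gates differently; PyTorch's `nn.LSTM`, for example, uses the order input, forget, cell, output.

```python
import numpy as np


def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))


def lstm_gates(z_t, W, b):
    """Return (f_t, i_t, c_tilde, o_t) for the stacked input z_t = [h_{t-1}, x_t].

    W: shape (4 * hidden, len(z_t)), rows stacked as [W_f; W_i; W_C; W_o]
    b: shape (4 * hidden,), stacked the same way as [b_f; b_i; b_C; b_o]
    """
    a = W @ z_t + b                      # W_g z_t + b_g for all four gates at once
    a_f, a_i, a_c, a_o = np.split(a, 4)  # per-gate pre-activations
    f_t = sigmoid(a_f)                   # forget gate, values in (0, 1)
    i_t = sigmoid(a_i)                   # input gate, values in (0, 1)
    c_tilde = np.tanh(a_c)               # candidate values, in (-1, 1)
    o_t = sigmoid(a_o)                   # output (read) gate, values in (0, 1)
    return f_t, i_t, c_tilde, o_t


rng = np.random.default_rng(0)
hidden, inputs = 3, 2
z_t = rng.standard_normal(hidden + inputs)
W = rng.standard_normal((4 * hidden, hidden + inputs))
b = np.zeros(4 * hidden)
f_t, i_t, c_tilde, o_t = lstm_gates(z_t, W, b)
print(f_t.shape, i_t.shape, c_tilde.shape, o_t.shape)  # (3,) (3,) (3,) (3,)
```

Computing one affine map and splitting it gives the same result as four separate $W_g z_t + b_g$ products. It is simply a common way to batch the work.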

Instead, these are the exact formulas:

- $C_t = f_t \odot C_{t-1} + i_t \odot \tilde{C}_t$: new cell state (where $\odot$ is element-wise multiplication)
- $h_t = o_t \odot \tanh(C_t)$: new hidden state
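Both update equations are element-wise, which is easy to see with hand-picked gate values. The sketch below uses a 2-unit cell with idealized gates of exactly 0 or 1 (a real sigmoid only approaches these limits). The values and the name `lstm_state_update` are hypothetical.

```python
import numpy as np


def lstm_state_update(c_prev, f_t, i_t, c_tilde, o_t):
    # C_t = f_t ⊙ C_{t-1} + i_t ⊙ C~_t  (NumPy's * is element-wise)
    c_t = f_t * c_prev + i_t * c_tilde
    # h_t = o_t ⊙ tanh(C_t)
    h_t = o_t * np.tanh(c_t)
    return c_t, h_t


c_prev = np.array([1.0, -2.0])
f_t = np.array([1.0, 0.0])      # unit 0 keeps its memory, unit 1 forgets it
i_t = np.array([0.0, 1.0])      # unit 0 writes nothing, unit 1 writes the candidate
c_tilde = np.array([0.5, 0.5])
o_t = np.array([1.0, 0.0])      # unit 0 is read out, unit 1 stays hidden
c_t, h_t = lstm_state_update(c_prev, f_t, i_t, c_tilde, o_t)
print(c_t)  # [1.  0.5]
print(h_t)  # h_t[0] = tanh(1), h_t[1] = 0 because o_t[1] = 0
```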