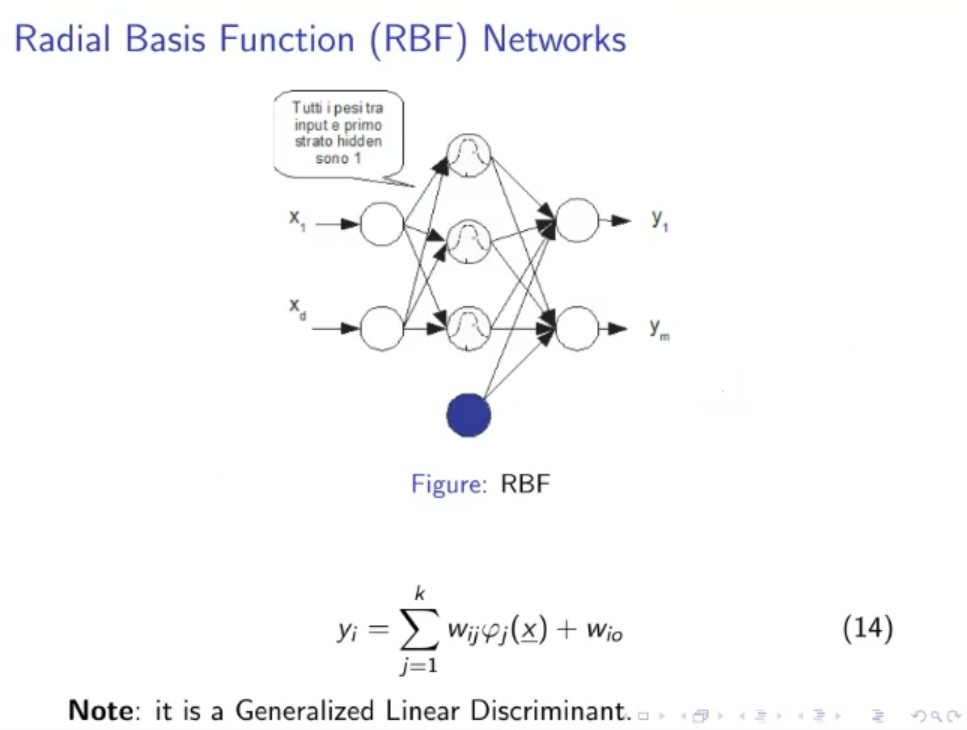

RBF (Radial Basis Function) Networks

- A generalized linear discriminant

- RBF NNs are conceptually similar to K-Nearest Neighbour (K-NN): a predicted value is likely to be about the same as the values of other items whose predictor variables are close. One difference from K-NN is that here we train a neural network, whereas K-NN has to store every training sample, so K-NN requires much more space.

- Definition of Radial Basis Function

- RBF Networks are 2-layer NNs (input layer, 1 hidden layer, output layer).

- All weights between the input layer and the hidden layer are equal to $1$, so the input reaches every hidden unit unchanged.

- There could be a bias term: $w_0$.

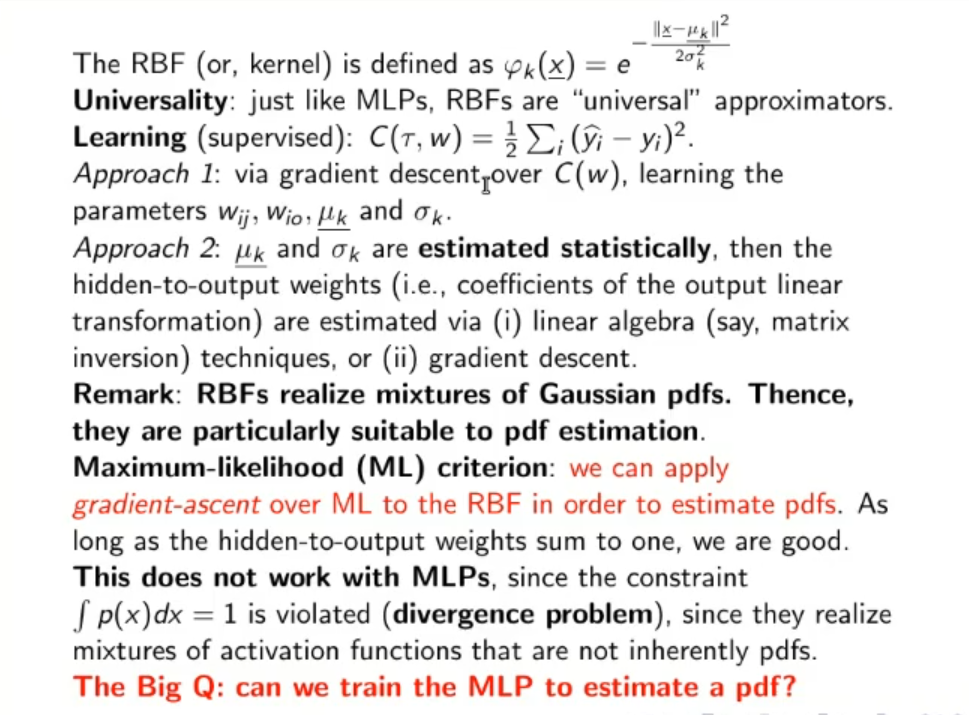

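- The RB Function (Radial Basis Function), or kernel, is defined as:

  $$\phi_j(\mathbf{x}) = \exp\left(-\frac{\lVert \mathbf{x} - \boldsymbol{\mu}_j \rVert^2}{2\sigma_j^2}\right)$$

  where $\boldsymbol{\mu}_j$ is the center and $\sigma_j$ the width of the $j$-th hidden unit.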

- A simple RBF Network with just 1 hidden layer of $M$ units has this form:

  $$y(\mathbf{x}) = \sum_{j=1}^{M} w_j\,\phi_j(\mathbf{x}) + w_0$$

- With Gaussian kernels, an RBF network realizes a mixture of Gaussian PDFs, hence it is particularly suitable for PDF estimation.

- Like MLPs, RBF Networks are “universal” approximators.
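To make the forward pass concrete, here is a minimal pure-Python sketch, assuming the Gaussian kernel $\phi_j(\mathbf{x}) = \exp(-\lVert \mathbf{x} - \boldsymbol{\mu}_j \rVert^2 / 2\sigma_j^2)$ and a single output $y(\mathbf{x}) = \sum_j w_j \phi_j(\mathbf{x}) + w_0$. The function names (`gaussian_rbf`, `rbf_forward`) and the numeric values in the usage lines are hypothetical, chosen only for illustration.

```python
import math

def gaussian_rbf(x, mu, sigma):
    """phi(x) = exp(-||x - mu||^2 / (2 sigma^2)); x and mu are lists of floats."""
    sq_dist = sum((xi - mi) ** 2 for xi, mi in zip(x, mu))
    return math.exp(-sq_dist / (2.0 * sigma ** 2))

def rbf_forward(x, centers, sigmas, weights, bias=0.0):
    """Single-output RBF network: y(x) = sum_j w_j * phi_j(x) + w_0."""
    # Input -> hidden: no trainable weights (all equal to 1), so the raw x
    # is passed unchanged to every hidden unit.
    hidden = [gaussian_rbf(x, mu, s) for mu, s in zip(centers, sigmas)]
    # Hidden -> output: the only weighted, linear layer.
    return sum(w * h for w, h in zip(weights, hidden)) + bias

# Usage with hypothetical parameters:
centers = [[0.0, 0.0], [1.0, 1.0]]  # mu_j
sigmas = [1.0, 0.5]                 # sigma_j
weights = [2.0, -1.0]               # w_j
y = rbf_forward([0.0, 0.0], centers, sigmas, weights, bias=0.5)
```

The only trainable multiply-and-add happens between the hidden and output layers; the hidden layer is fully determined by the centers and widths.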

The learning part is supervised.

We usually consider 2 approaches:

- Via gradient descent over the error function $E$, we learn all the parameters: $\boldsymbol{\mu}_j$, $\sigma_j$, and $w_j$.

- $\boldsymbol{\mu}_j$ and $\sigma_j$ are estimated statistically from the training data; then the remaining parameters $w_j$ and $w_0$ are estimated via linear algebra methods (such as matrix inversion), or via the previous method (gradient descent).
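The second, two-stage approach can be sketched as follows. This is an illustration under stated assumptions, not the course's exact procedure: stage 1 is replaced by a simple stand-in (every 5th training point becomes a center, and one hand-picked width is shared by all units), and stage 2 solves the normal equations $(\Phi^\top\Phi + \lambda I)\,\mathbf{w} = \Phi^\top \mathbf{y}$ by Gaussian elimination, which plays the role of the matrix inversion. The small ridge term $\lambda$ is an added assumption for numerical stability; all function names are hypothetical.

```python
import math

def gaussian_rbf(x, mu, sigma):
    """phi(x) = exp(-||x - mu||^2 / (2 sigma^2)); x and mu are lists of floats."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, mu))
    return math.exp(-sq_dist / (2.0 * sigma ** 2))

def solve(A, b):
    """Solve the square system A w = b by Gaussian elimination with partial pivoting."""
    n = len(A)
    M = [row[:] + [bi] for row, bi in zip(A, b)]  # augmented matrix [A | b]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    w = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = sum(M[r][c] * w[c] for c in range(r + 1, n))
        w[r] = (M[r][n] - s) / M[r][r]
    return w

def fit_output_weights(X, y, centers, sigma, ridge=1e-6):
    """Stage 2: centers and sigma are fixed; solve (Phi^T Phi + ridge*I) w = Phi^T y.
    Column 0 of Phi is the constant 1, so w[0] is the bias w_0."""
    Phi = [[1.0] + [gaussian_rbf(x, mu, sigma) for mu in centers] for x in X]
    m = len(Phi[0])
    A = [[sum(row[i] * row[j] for row in Phi) + (ridge if i == j else 0.0)
          for j in range(m)] for i in range(m)]
    b = [sum(row[i] * t for row, t in zip(Phi, y)) for i in range(m)]
    return solve(A, b)

def predict(x, centers, sigma, w):
    """y(x) = w_0 + sum_j w_j * phi_j(x)."""
    return w[0] + sum(wj * gaussian_rbf(x, mu, sigma) for wj, mu in zip(w[1:], centers))

# Usage: learn y = sin(x) on [0, 2*pi] from 41 samples.
X = [[i * 2 * math.pi / 40] for i in range(41)]
y = [math.sin(x[0]) for x in X]
centers = X[::5]  # stage-1 stand-in: every 5th sample is a center (9 centers)
sigma = 0.7       # stage-1 stand-in: one shared, hand-picked width
w = fit_output_weights(X, y, centers, sigma)
max_err = max(abs(predict(x, centers, sigma, w) - t) for x, t in zip(X, y))
```

Once stage 1 has fixed every $\phi_j$, the output is linear in $w_j$ and $w_0$, so stage 2 is an ordinary linear least-squares problem that needs no iterations.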

NOTE: With RBF Networks we can estimate PDFs by applying gradient ASCENT to the likelihood (the ML, Maximum Likelihood, method).

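The ML method only works if the weights between the hidden layer and the output layer sum up to $1$, i.e. $\sum_{j=1}^{M} w_j = 1$, so that the output, a weighted sum of Gaussian PDFs, integrates to $1$.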

This can't be done with MLPs because the constraint is violated: MLPs realize mixtures of activation functions that are not inherently PDFs.
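A minimal 1-D sketch of ML density estimation by gradient ascent, assuming fixed centers and a shared width, kernels normalized as Gaussian PDFs, and output weights parameterized as $w_k = \mathrm{softmax}(\mathbf{a})_k$ so that they are non-negative and always sum to 1. The softmax parameterization is an assumption of this sketch (one common way to enforce the constraint), not necessarily the course's method. For the log-likelihood $L = \sum_n \log p(x_n)$ it gives $\partial L / \partial a_k = \sum_n (r_{nk} - w_k)$, where $r_{nk} = w_k\,\mathcal{N}(x_n; \mu_k, \sigma^2) / p(x_n)$. The toy data and all names are hypothetical.

```python
import math

def normal_pdf(x, mu, sigma):
    """Normalized 1-D Gaussian kernel, so each hidden unit integrates to 1."""
    return math.exp(-(x - mu) ** 2 / (2 * sigma ** 2)) / (math.sqrt(2 * math.pi) * sigma)

def softmax(a):
    m = max(a)
    e = [math.exp(v - m) for v in a]
    s = sum(e)
    return [v / s for v in e]

def density(x, centers, sigma, w):
    """Network output p(x) = sum_k w_k N(x; mu_k, sigma^2)."""
    return sum(wk * normal_pdf(x, mu, sigma) for wk, mu in zip(w, centers))

def log_likelihood(data, centers, sigma, w):
    return sum(math.log(density(x, centers, sigma, w)) for x in data)

def fit_mixture_weights(data, centers, sigma, lr=0.1, steps=200):
    """Gradient ASCENT on the average log-likelihood w.r.t. logits a (w = softmax(a))."""
    a = [0.0] * len(centers)  # start from uniform weights
    for _ in range(steps):
        w = softmax(a)
        grad = [0.0] * len(a)
        for x in data:
            p = density(x, centers, sigma, w)
            for k, mu in enumerate(centers):
                r = w[k] * normal_pdf(x, mu, sigma) / p  # responsibility of unit k
                grad[k] += r - w[k]
        a = [ak + lr * g / len(data) for ak, g in zip(a, grad)]  # ascent step
    return softmax(a)

# Usage (toy data): most samples near -2, a few near +2, none near 0.
data = [-2.1, -2.0, -1.9, -2.2, -1.8, -2.05, 1.9, 2.1]
centers = [-2.0, 0.0, 2.0]
sigma = 0.5
w = fit_mixture_weights(data, centers, sigma)
```

Because each kernel integrates to 1 and the weights sum to 1, a numerical integral of `density` over a wide interval comes out close to 1; with unconstrained weights that would not hold in general.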

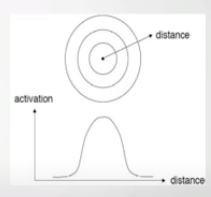

Definition of Radial Basis Function

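An RBF is so named because the radial distance is the argument to the function; in our case:

$$r_j = \lVert \mathbf{x} - \boldsymbol{\mu}_j \rVert, \qquad \phi_j(\mathbf{x}) = \exp\left(-\frac{r_j^2}{2\sigma_j^2}\right)$$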

The farther a neuron's center $\boldsymbol{\mu}_j$ is from the point being evaluated, the less influence that neuron has.
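A short sketch of the "radial" property, assuming the Gaussian kernel: the unit's output depends only on $r = \lVert \mathbf{x} - \boldsymbol{\mu} \rVert$, so points at the same distance from the center get the same activation, and the activation decays as $r$ grows. The helper names and the center value are hypothetical.

```python
import math

def euclidean(x, mu):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, mu)))

def gaussian_of_radius(r, sigma=1.0):
    return math.exp(-r ** 2 / (2 * sigma ** 2))

def rbf_unit(x, mu, sigma=1.0):
    # The unit only sees the radius r = ||x - mu||, never the direction of x - mu.
    return gaussian_of_radius(euclidean(x, mu), sigma)

mu = [1.0, 2.0]
# Two points at the same distance (r = 1) from mu, in different directions:
print(rbf_unit([2.0, 2.0], mu), rbf_unit([1.0, 1.0], mu))  # equal values
# Influence shrinks as the point moves away from the center:
print([round(rbf_unit([1.0 + d, 2.0], mu), 4) for d in (0, 1, 2, 3)])
# [1.0, 0.6065, 0.1353, 0.0111]
```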
