Fast Recap:

- Likelihood

- Likelihood of Conditional Probabilities “Likelihood of “

- ML (Maximum Likelihood) Estimate

- Log-Likelihood

- Gaussian ML Estimate

- Validation of Classifiers

- Normal Method: 60/20/20 (60% training, 20% validation, 20% test)

- “Leave One Out” Method

- “Many-Fold Cross-Validation” Method
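For a Gaussian, the ML bullets above come down to one recipe. Maximize the log-likelihood, which turns the product of densities into a sum. This has a closed-form solution: the sample mean and the sample covariance normalized by N (not N - 1). The following is a minimal NumPy sketch, assuming the samples are the rows of `X`. The function names are our own.

```python
import numpy as np

def gaussian_log_likelihood(X, mu, Sigma):
    """Sum over the rows x_n of X of ln N(x_n | mu, Sigma)."""
    N, d = X.shape
    diff = X - mu
    Sigma_inv = np.linalg.inv(Sigma)
    _, logdet = np.linalg.slogdet(Sigma)
    # Squared Mahalanobis distance of every sample: diff_n^T Sigma^{-1} diff_n
    mahal = np.einsum("ni,ij,nj->n", diff, Sigma_inv, diff)
    return -0.5 * (mahal.sum() + N * logdet + N * d * np.log(2 * np.pi))

def gaussian_ml_estimate(X):
    """Closed-form maximizer of the log-likelihood: sample mean and covariance / N."""
    mu = X.mean(axis=0)
    diff = X - mu
    Sigma = diff.T @ diff / X.shape[0]
    return mu, Sigma

rng = np.random.default_rng(0)
X = rng.normal(loc=[1.0, -2.0], scale=[1.0, 0.5], size=(500, 2))
mu_ml, Sigma_ml = gaussian_ml_estimate(X)
best = gaussian_log_likelihood(X, mu_ml, Sigma_ml)
# Moving away from the ML estimate can only lower the log-likelihood
worse = gaussian_log_likelihood(X, mu_ml + 0.1, Sigma_ml)
```

The covariance returned here equals `np.cov(X, rowvar=False, bias=True)`. The default `np.cov` divides by N - 1 instead, which is the unbiased estimate rather than the ML one.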

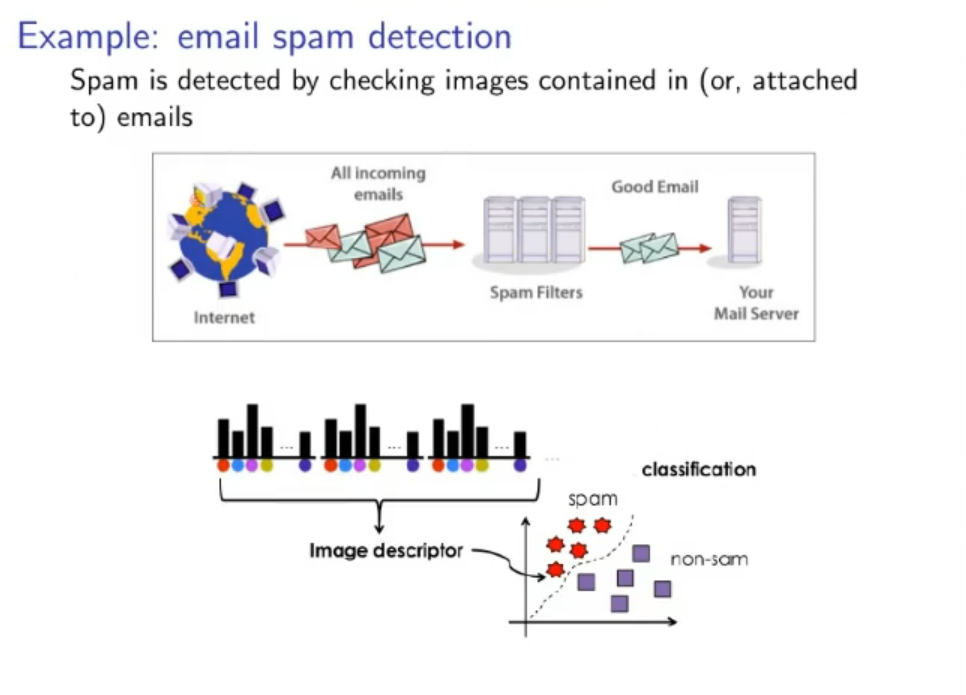
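The three validation schemes can be sketched as index bookkeeping. The 60/20/20 method is one shuffled split. Many-fold cross-validation rotates which of k folds is held out for testing, and "leave one out" is the special case k = N. This is a minimal sketch. The `fit`/`predict` callables and the toy nearest-mean classifier are hypothetical stand-ins for whatever classifier is being validated.

```python
import numpy as np

def split_60_20_20(n_samples, seed=0):
    """Shuffle the sample indices, then cut them 60% / 20% / 20%."""
    idx = np.random.default_rng(seed).permutation(n_samples)
    n_train = (6 * n_samples) // 10
    n_val = (2 * n_samples) // 10
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]

def k_fold_indices(n_samples, k, seed=0):
    """Yield (train, test) index pairs; k == n_samples gives leave-one-out."""
    idx = np.random.default_rng(seed).permutation(n_samples)
    folds = np.array_split(idx, k)
    for i in range(k):
        train = np.concatenate([folds[j] for j in range(k) if j != i])
        yield train, folds[i]

def cross_val_error(X, y, fit, predict, k):
    """Average misclassification rate over the k held-out folds."""
    errors = []
    for train, test in k_fold_indices(len(X), k):
        model = fit(X[train], y[train])
        errors.append(np.mean(predict(model, X[test]) != y[test]))
    return float(np.mean(errors))

def fit_nearest_mean(X, y):
    """Hypothetical toy classifier for the demo: one mean vector per class."""
    classes = np.unique(y)
    return classes, np.array([X[y == c].mean(axis=0) for c in classes])

def predict_nearest_mean(model, X):
    classes, means = model
    dists = ((X[:, None, :] - means[None, :, :]) ** 2).sum(axis=2)
    return classes[dists.argmin(axis=1)]

rng = np.random.default_rng(1)
X = np.vstack([rng.normal(0.0, 0.5, size=(20, 2)), rng.normal(10.0, 0.5, size=(20, 2))])
y = np.array([0] * 20 + [1] * 20)
loo_error = cross_val_error(X, y, fit_nearest_mean, predict_nearest_mean, k=len(X))
five_fold_error = cross_val_error(X, y, fit_nearest_mean, predict_nearest_mean, k=5)
```

Leave-one-out trains N models, so it is the most expensive option. It pays off when data is scarce, because every model sees all but one sample.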

- Supervised Learning

- Non-Parametric Estimate

- Relative Frequency Estimate

- Easily Estimate a PDF
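The relative frequency estimate can be sketched as a histogram. If k of the N samples fall in a bin of width h, the density in that bin is estimated as (k / N) / h. This is a minimal NumPy sketch with equal-width bins over an assumed range `[low, high]`. Samples outside that range are simply not counted.

```python
import numpy as np

def relative_frequency_pdf(samples, bins, low, high):
    """Histogram density estimate: p(x) ~ (k / N) / h for the bin containing x."""
    samples = np.asarray(samples, dtype=float)
    edges = np.linspace(low, high, bins + 1)
    counts, _ = np.histogram(samples, bins=edges)
    width = edges[1] - edges[0]
    density = counts / (len(samples) * width)

    def pdf(x):
        # Find the bin of each query point; points outside [low, high] get density 0
        x = np.atleast_1d(np.asarray(x, dtype=float))
        i = np.clip(np.searchsorted(edges, x, side="right") - 1, 0, bins - 1)
        inside = (x >= low) & (x <= high)
        return np.where(inside, density[i], 0.0)

    return edges, density, pdf

edges, density, pdf = relative_frequency_pdf([0.1, 0.2, 0.3, 0.7], bins=2, low=0.0, high=1.0)
```

Three of the four samples fall in `[0, 0.5)`, so that bin gets density 3 / (4 · 0.5) = 1.5 and the other bin gets 0.5. The bin width trades smoothness against detail.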

Recap:

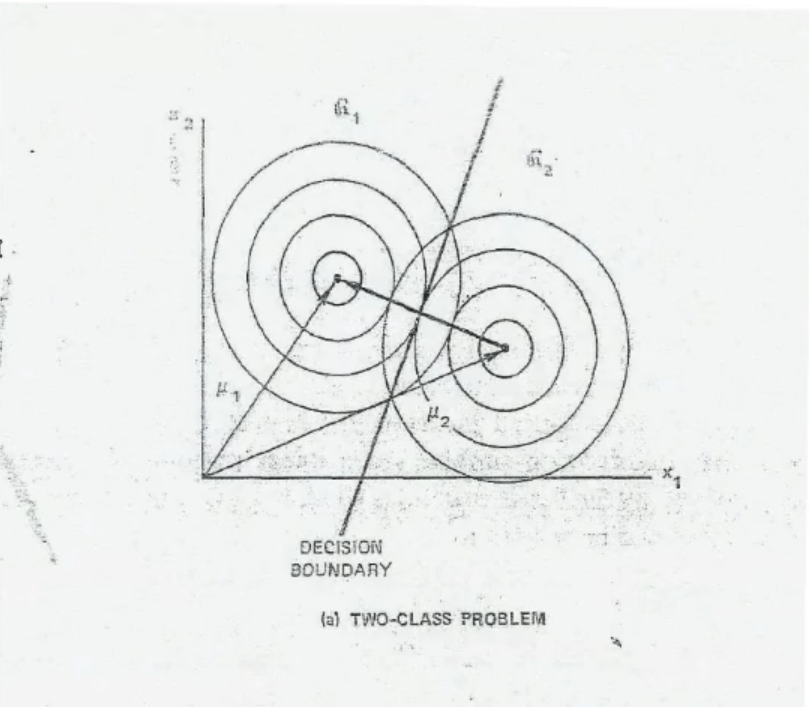

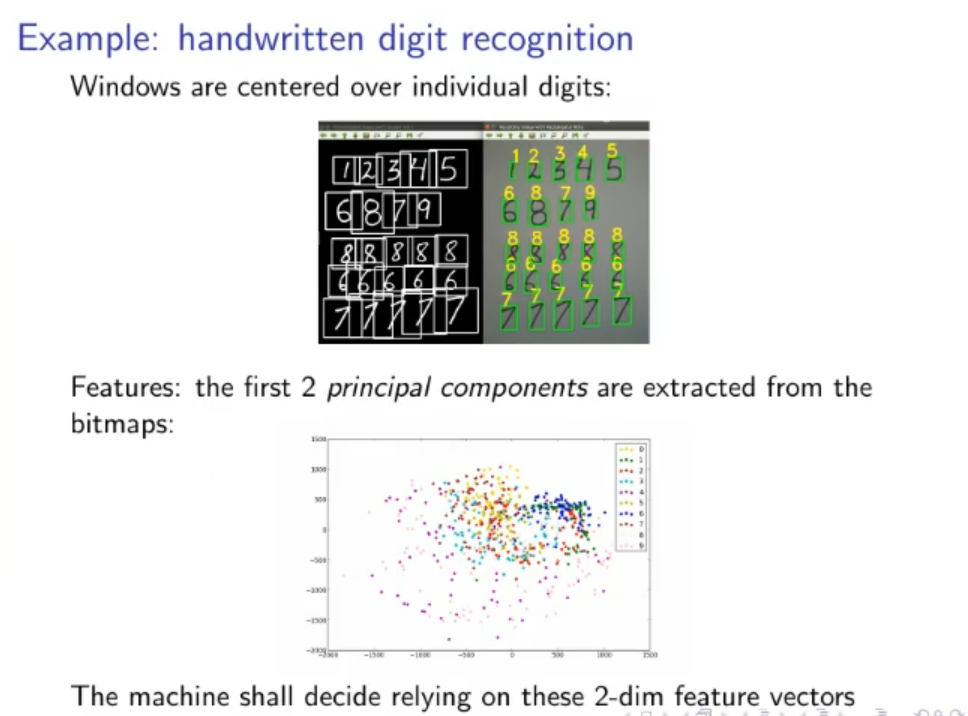

First 2 Principal Components: given a set of data, we can fit a Multivariate Gaussian Distribution to it. The first X principal components are the eigenvectors of its covariance matrix that belong to the X largest eigenvalues.
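A minimal NumPy sketch of this: estimate the covariance, take its eigendecomposition, and keep the eigenvectors of the largest eigenvalues. The function names are our own. `np.cov` divides by N - 1, but the eigenvectors (the principal directions) are the same as with the ML covariance.

```python
import numpy as np

def first_principal_components(X, k=2):
    """Eigenvalues and eigenvectors (as columns) of the k largest-variance directions."""
    Sigma = np.cov(X, rowvar=False)
    # eigh is meant for symmetric matrices and returns eigenvalues in ascending order
    eigvals, eigvecs = np.linalg.eigh(Sigma)
    order = np.argsort(eigvals)[::-1][:k]
    return eigvals[order], eigvecs[:, order]

def project(X, components):
    """Coordinates of the centred data along the chosen principal directions."""
    return (X - X.mean(axis=0)) @ components

rng = np.random.default_rng(0)
X = rng.normal(size=(2000, 3)) * np.array([5.0, 2.0, 0.1])
variances, components = first_principal_components(X, k=2)
Z = project(X, components)  # 2-D coordinates, e.g. for a scatter plot
```

Each eigenvalue is the variance of the data along its eigenvector. That is why the components with the largest eigenvalues are the most informative ones to plot.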

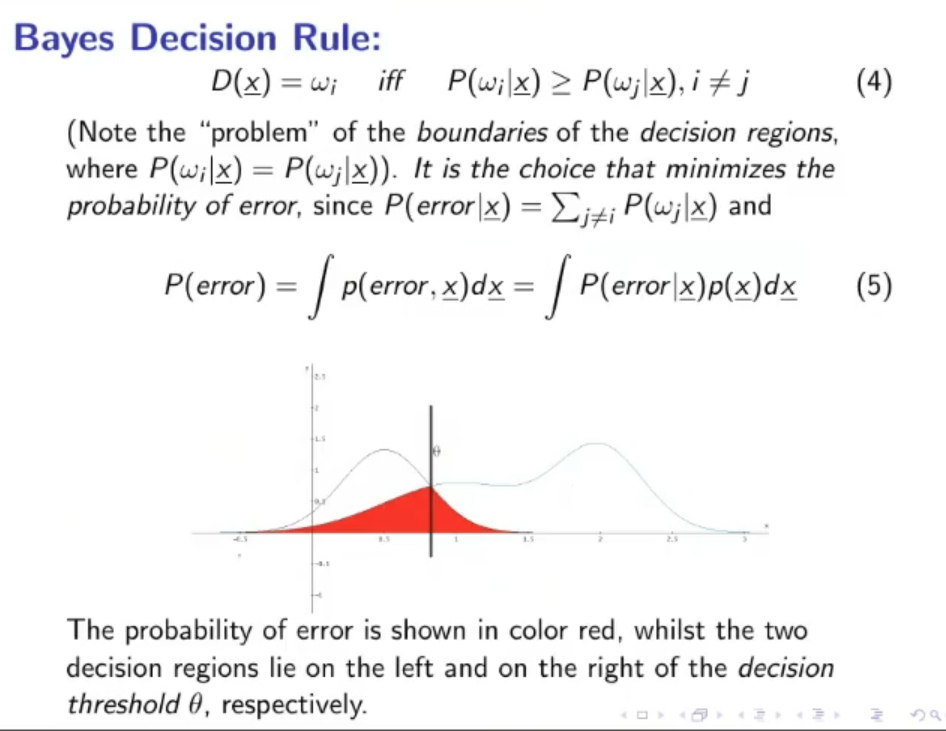

Bayes Decision Rule: the Bayes decision rule chooses the class that maximizes the joint probability of the data and the class.

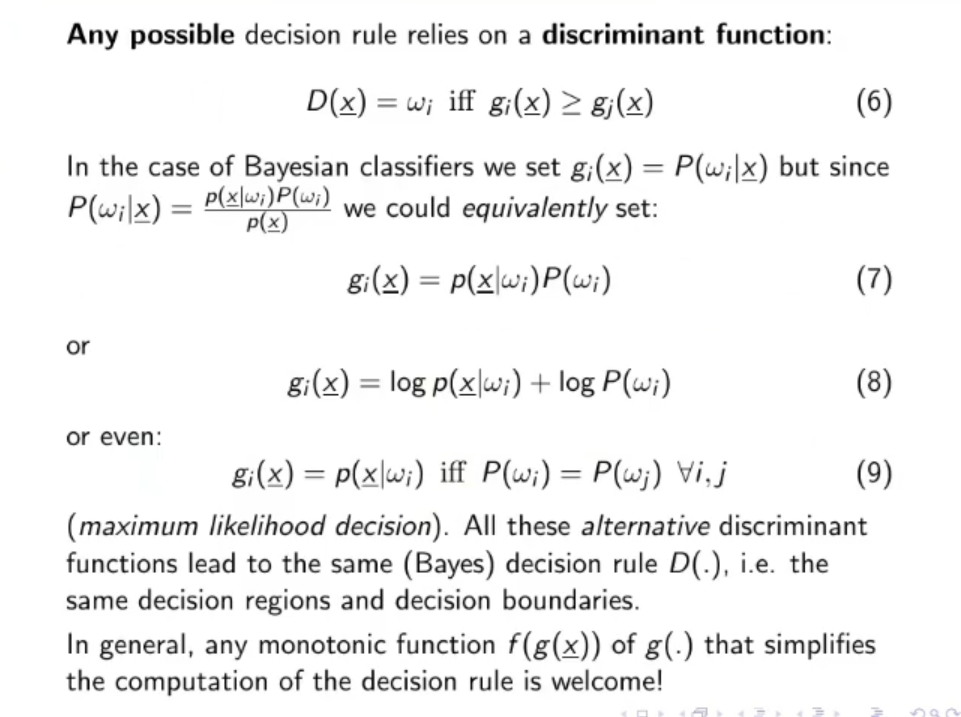
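As a sketch of the rule, write ω_i for class i (our notation, which may differ from the figures). The joint probability factors as p(x | ω_i) · P(ω_i). Because p(x) is the same for every class, maximizing the joint also maximizes the posterior. The example below assumes hypothetical 1-D Gaussian class-conditional densities.

```python
import math

def gaussian_pdf(x, mean, std):
    """1-D normal density, used here as an example class-conditional p(x | w_i)."""
    return math.exp(-0.5 * ((x - mean) / std) ** 2) / (std * math.sqrt(2 * math.pi))

def bayes_decide(x, likelihoods, priors):
    """Return the index i maximizing the joint probability p(x | w_i) * P(w_i)."""
    joints = [lik(x) * prior for lik, prior in zip(likelihoods, priors)]
    return max(range(len(joints)), key=joints.__getitem__)

likelihoods = [lambda x: gaussian_pdf(x, 0.0, 1.0), lambda x: gaussian_pdf(x, 2.0, 1.0)]
equal_priors = bayes_decide(1.5, likelihoods, [0.5, 0.5])
skewed_priors = bayes_decide(1.5, likelihoods, [0.9, 0.1])
```

With equal priors, x = 1.5 goes to class 1 because it is closer to that mean. With priors 0.9 / 0.1, the boundary shifts to about x ≈ 2.1, and x = 1.5 goes to class 0.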

Bayes Decision Rule with Discriminant Functions

We define the Maximum Likelihood Decision as:

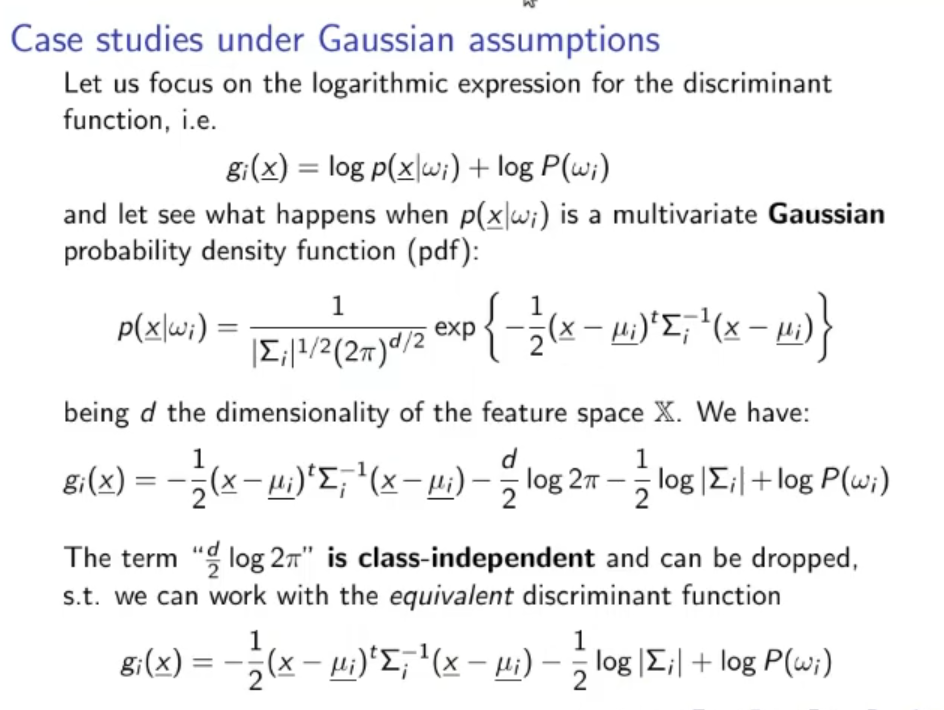

Under Gaussian assumptions, the MLD becomes:

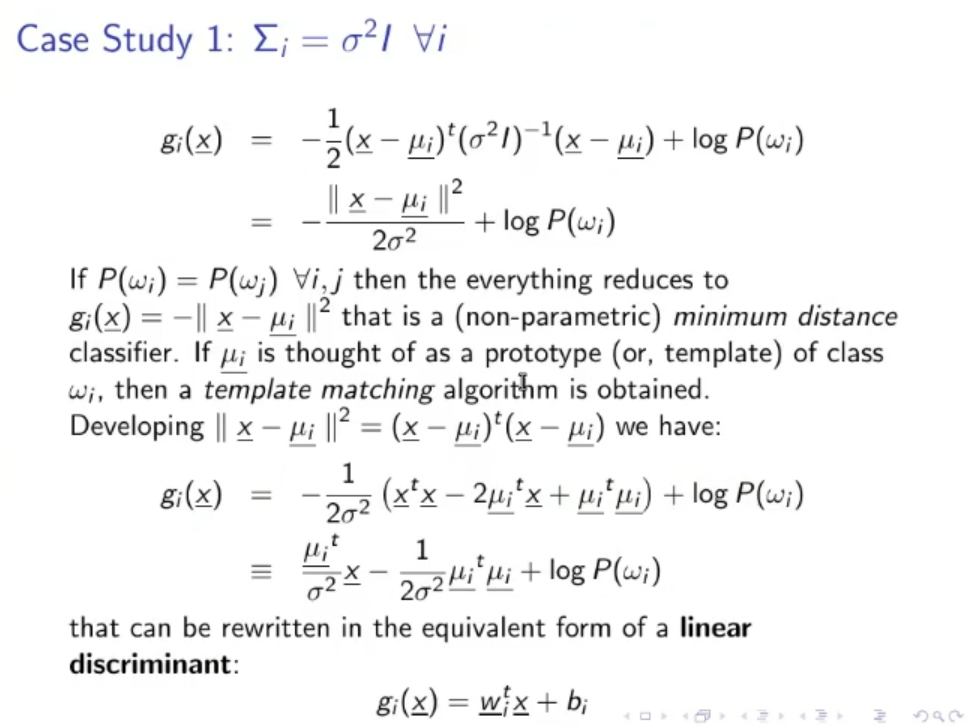
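This is a hedged sketch of the Gaussian case. It assumes the ML decision picks the class whose likelihood p(x | ω_i) is largest, with no prior term. Each class then gets the score ln N(x | μ_i, Σ_i), minus the -d/2 ln(2π) term that is identical for all classes. The function names and the μ_i, Σ_i notation are our own.

```python
import numpy as np

def gaussian_log_discriminant(x, mu, Sigma):
    """ln N(x | mu, Sigma) without the class-independent -d/2 ln(2 pi) term."""
    diff = x - mu
    _, logdet = np.linalg.slogdet(Sigma)
    # solve(Sigma, diff) computes Sigma^{-1} diff without forming the inverse
    return -0.5 * diff @ np.linalg.solve(Sigma, diff) - 0.5 * logdet

def ml_decision(x, mus, Sigmas):
    """Index of the class whose Gaussian likelihood p(x | w_i) is largest."""
    scores = [gaussian_log_discriminant(x, m, S) for m, S in zip(mus, Sigmas)]
    return int(np.argmax(scores))

mus = [np.zeros(2), np.array([3.0, 0.0])]
Sigmas = [np.eye(2), np.eye(2)]
near_first = ml_decision(np.array([1.0, 0.0]), mus, Sigmas)
```

When the covariances differ, the -½ ln|Σ_i| term matters as well. A broad class can win far from both means even if its mean is farther away.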

~Ex.: Diagonal Covariance Matrix :

In this particular case, the MLD (Maximum Likelihood Decision) is linear with respect to the data.
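A short derivation of where the linearity comes from. It assumes every class shares the same diagonal covariance Σ_i = σ²I; this is our assumption, since the original condition is only in the figure. With class-specific diagonal covariances, the quadratic term does not cancel. The symbols μ_i, σ², w_i, w_{i0} are our notation.

$$
\begin{aligned}
\ln p(\mathbf{x}\mid\omega_i) &= -\frac{\lVert \mathbf{x}-\boldsymbol{\mu}_i\rVert^2}{2\sigma^2} - \frac{d}{2}\ln(2\pi\sigma^2) \\
&= -\frac{\mathbf{x}^\top\mathbf{x} - 2\boldsymbol{\mu}_i^\top\mathbf{x} + \boldsymbol{\mu}_i^\top\boldsymbol{\mu}_i}{2\sigma^2} - \frac{d}{2}\ln(2\pi\sigma^2) \\
g_i(\mathbf{x}) &= \mathbf{w}_i^\top\mathbf{x} + w_{i0}, \qquad \mathbf{w}_i = \frac{\boldsymbol{\mu}_i}{\sigma^2}, \qquad w_{i0} = -\frac{\boldsymbol{\mu}_i^\top\boldsymbol{\mu}_i}{2\sigma^2}
\end{aligned}
$$

The last line drops $\mathbf{x}^\top\mathbf{x}/(2\sigma^2)$ and $\frac{d}{2}\ln(2\pi\sigma^2)$. Both are the same for every class, so they cannot change which class wins. Adding $\ln P(\omega_i)$ to $w_{i0}$ gives the Bayes version with priors.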

Naming :

- : classes, for example the gender (male/female) we want to identify.

- : weights

- : bias

- : data; either the input data to classify or the training data.

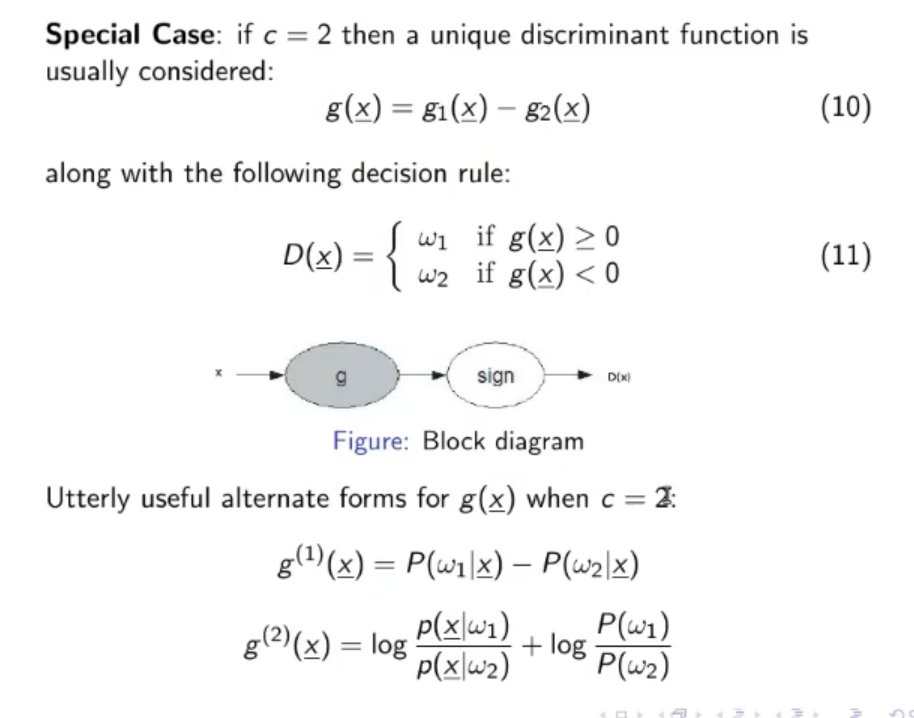

- : decision rule.

- : probability that, given the data, the corresponding class is .

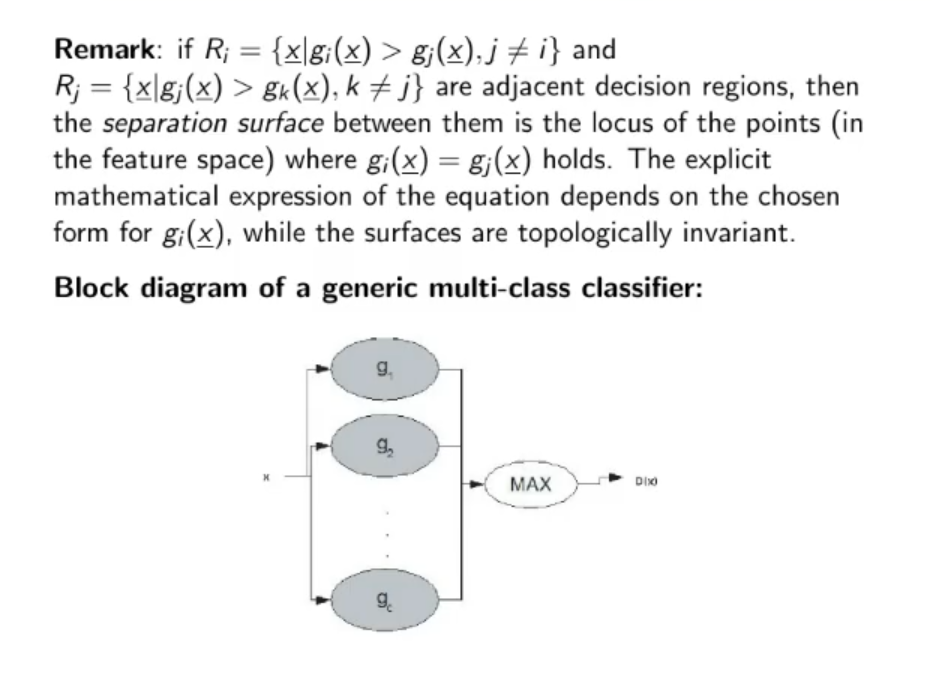

- : discriminant function of class ; with it, the Bayes decision rule can be rewritten as choosing the class whose discriminant function is largest.
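The naming above (weights, bias, discriminant functions, decision rule) fits together as follows. Each class gets a linear score g_i(x) = w_i^T x + b_i, and the decision rule is the argmax over classes. This is a minimal sketch that assumes the shared σ²I Gaussian case, where w_i = μ_i / σ² and b_i = -μ_i^T μ_i / (2σ²), plus ln P(ω_i) if priors are given. The function names are hypothetical.

```python
import numpy as np

def linear_discriminants(mus, sigma2, priors=None):
    """Weights W (one row per class) and biases b for the shared sigma^2 * I case."""
    mus = np.asarray(mus, dtype=float)
    W = mus / sigma2
    b = -np.sum(mus ** 2, axis=1) / (2 * sigma2)
    if priors is not None:
        b = b + np.log(priors)  # turns the ML decision into the Bayes decision
    return W, b

def decide(X, W, b):
    """Decision rule: assign each row x of X to argmax_i (w_i^T x + b_i)."""
    scores = np.atleast_2d(X) @ W.T + b
    return scores.argmax(axis=1)

W, b = linear_discriminants([[0.0, 0.0], [4.0, 0.0]], sigma2=1.0)
labels = decide([[1.0, 0.0], [3.0, 0.0]], W, b)
```

Without priors, this is a nearest-mean classifier written as a linear machine. With priors, the boundary moves toward the less likely class.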

Original Files:

==First 2 Principal Components==: the 2 eigenvectors of the covariance matrix of the data's Gaussian distribution that have the largest eigenvalues.