Fast Recap:

Recap:

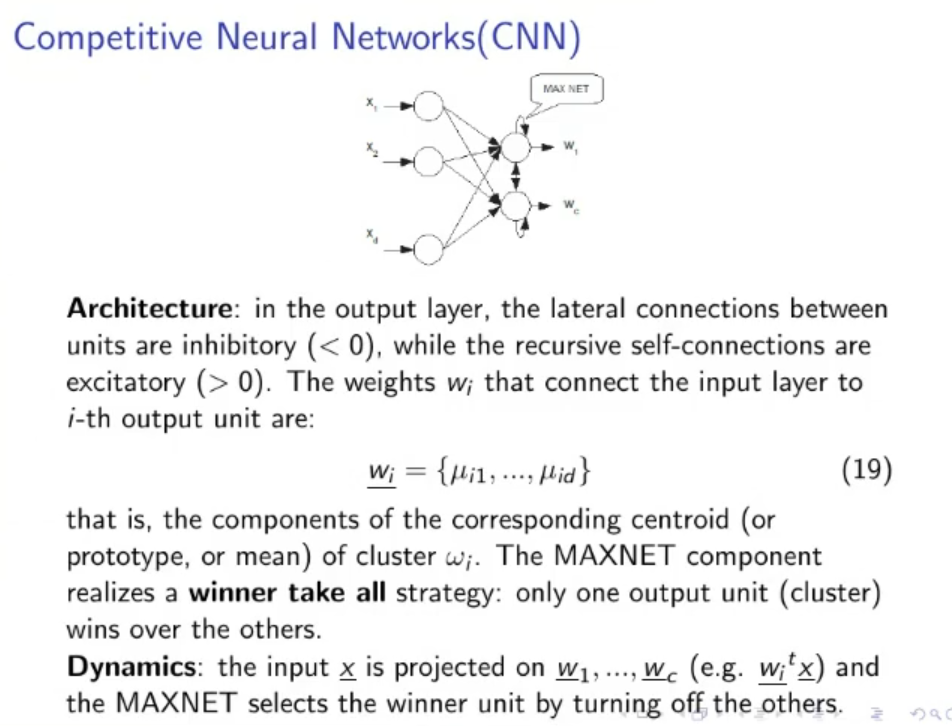

CNN (Competitive Neural Networks): ARCHITECTURE: In the output layer, there are two more types of connections:

- Lateral connections (each output neuron is connected to every other output neuron)

- Self-connections (each output neuron has a connection that goes from itself to itself)

The lateral connections are inhibitory (their weights are negative), while the self-connections are excitatory (their weights are positive).

Also, at the end of the network there is a MAXNET component that implements a winner-takes-all strategy: only one of the output units (a cluster) wins over the others (the losers are set to 0).

DYNAMICS: The dynamics are simple: the input is passed to the network, the network computes its outputs (one for each output unit), then the MAXNET selects the winner and turns off all the other outputs.
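The forward pass followed by the winner-takes-all step can be sketched as below. This is only a minimal sketch under stated assumptions: linear output units (a dot product between each unit's weights and the input), self-connection weight +1, lateral weight `-epsilon` with a small default value, and the function names `forward` and `maxnet` are all hypothetical choices, not taken from these notes.

```python
def forward(weights, x):
    """Output of each unit as the dot product of its weight vector with x (assumed)."""
    return [sum(w_j * x_j for w_j, x_j in zip(w, x)) for w in weights]


def maxnet(activations, epsilon=None, max_iters=1000):
    """Iterate mutual inhibition until at most one unit is still positive."""
    y = [max(0.0, a) for a in activations]
    k = len(y)
    if epsilon is None:
        epsilon = 1.0 / (2 * k)  # assumed: small inhibition, below 1/k
    for _ in range(max_iters):
        if sum(1 for v in y if v > 0) <= 1:
            break
        total = sum(y)
        # Self-connection (+1) keeps a unit's own value, lateral
        # connections (-epsilon) subtract the sum of all the other units.
        y = [max(0.0, v - epsilon * (total - v)) for v in y]
    return y


weights = [[1.0, 0.0], [0.0, 1.0]]  # two output units, two inputs
outputs = forward(weights, [0.2, 0.9])
winner_only = maxnet(outputs)
```

After the loop only the unit with the largest initial output is still positive and the others are 0. Exact ties are not resolved by this sketch: tied units keep inhibiting each other until `max_iters` runs out.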

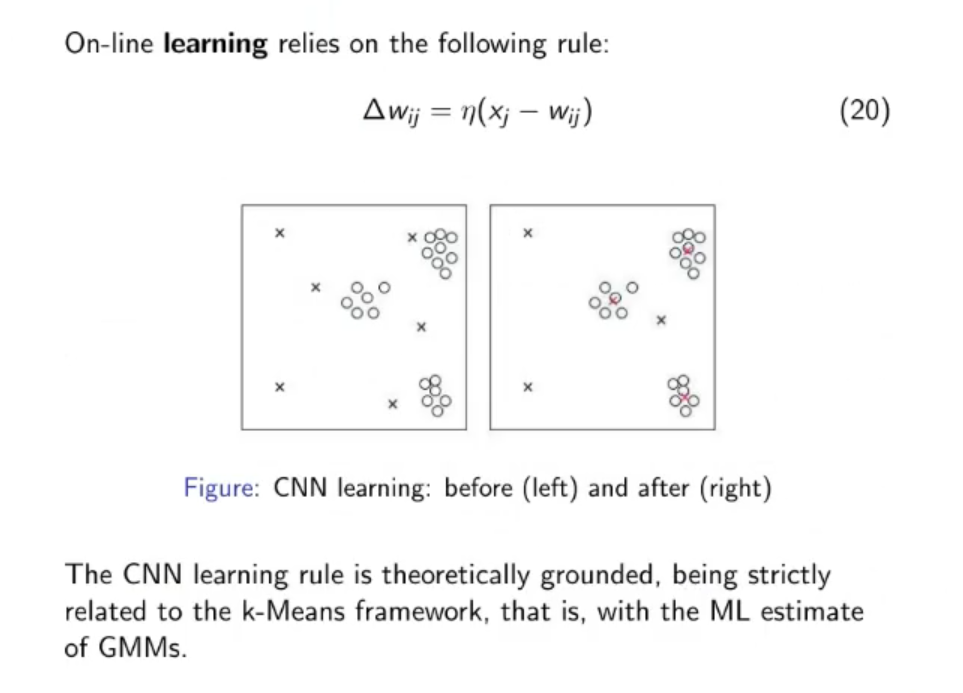

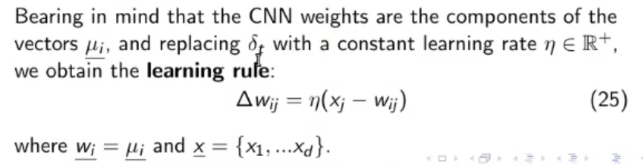

LEARNING: On-line learning that relies on the following rule:

NOTE: This formula is “theoretically grounded”. Ergo “It’s a good learning formula, we don’t need to search for another one”
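A minimal sketch of an on-line winner-takes-all update of this kind. It assumes the standard competitive rule, where only the winner's weights move toward the input by a fraction `eta`, and it assumes the winner is the unit whose weight vector is nearest to the input in Euclidean distance. The names `nearest_unit`, `train_step` and `eta`, as well as the toy data, are hypothetical.

```python
def nearest_unit(weights, x):
    """Index of the weight vector closest to x (squared Euclidean distance)."""
    dists = [sum((w_j - x_j) ** 2 for w_j, x_j in zip(w, x)) for w in weights]
    return dists.index(min(dists))


def train_step(weights, x, eta=0.1):
    """Move only the winner's weights toward x; the losers are left unchanged."""
    c = nearest_unit(weights, x)
    weights[c] = [w_j + eta * (x_j - w_j) for w_j, x_j in zip(weights[c], x)]
    return c


# Toy data: two well-separated groups of 2-D points (made up for illustration).
weights = [[0.0, 0.0], [1.0, 1.0]]
data = [[0.1, 0.2], [0.9, 0.8], [0.0, 0.1], [1.0, 0.9]]
for epoch in range(50):
    for x in data:  # on-line: one update per presented input
        train_step(weights, x, eta=0.1)
```

Each weight vector stays inside the group of points it wins, so the two units end up acting as cluster prototypes.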

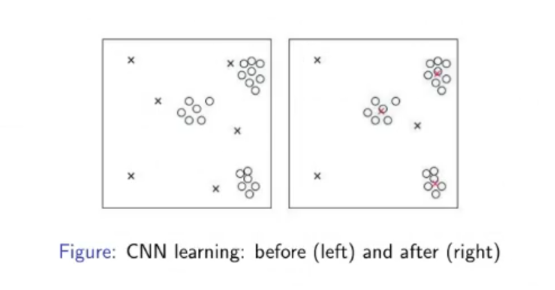

Here we see an example of the learning of a CNN:

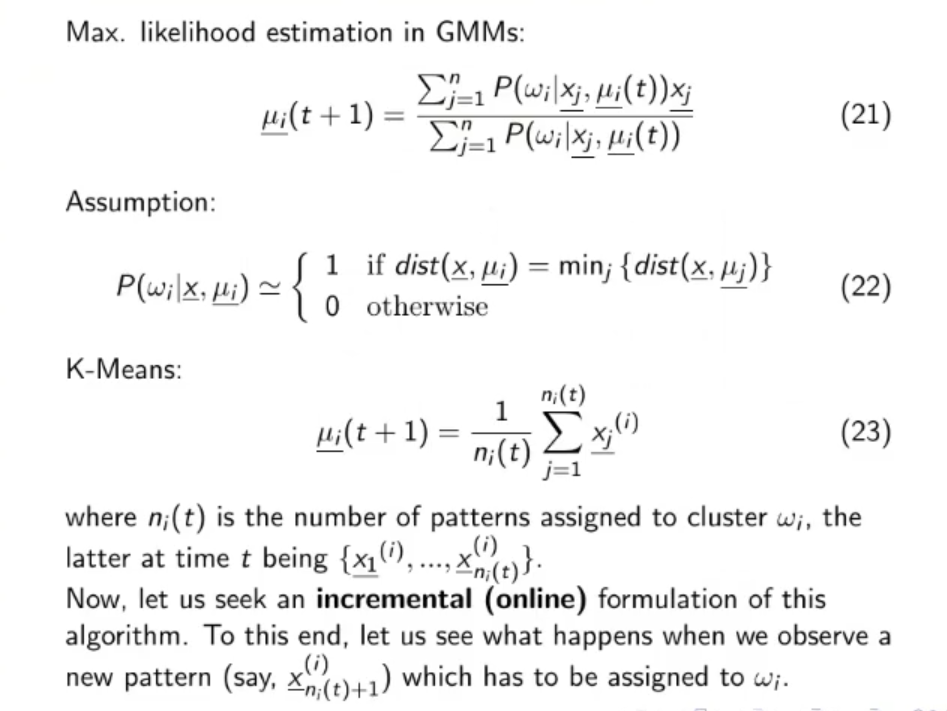

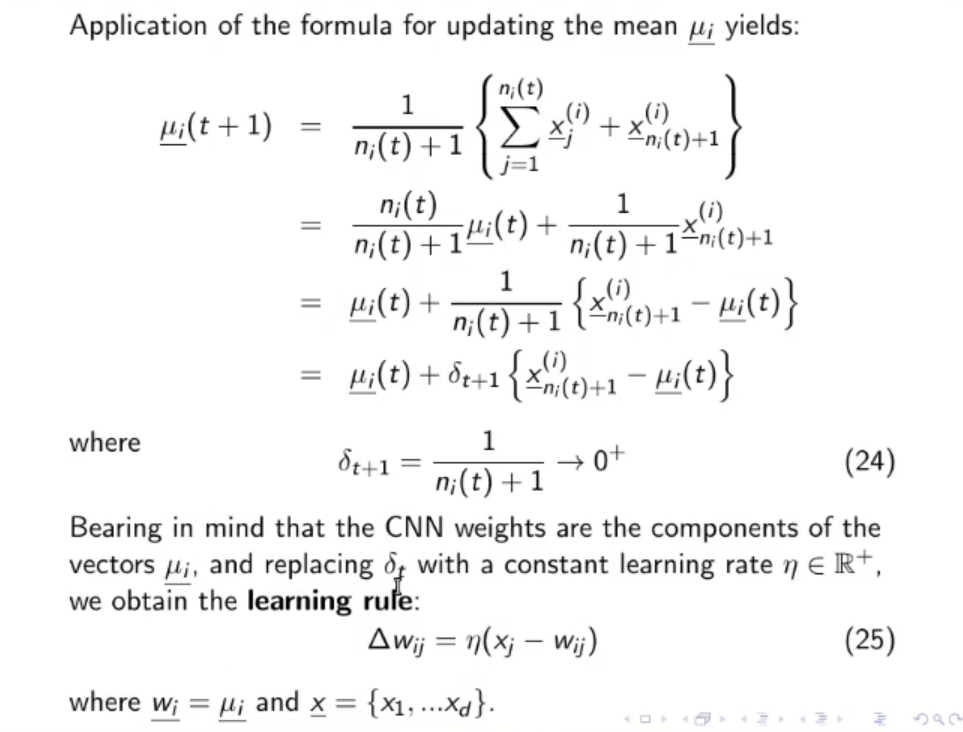

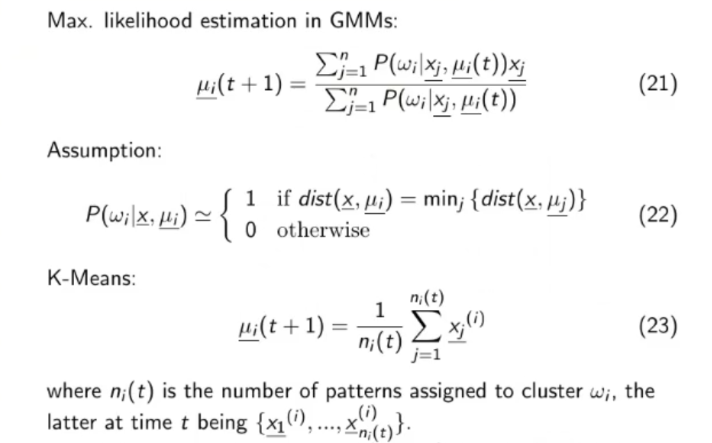

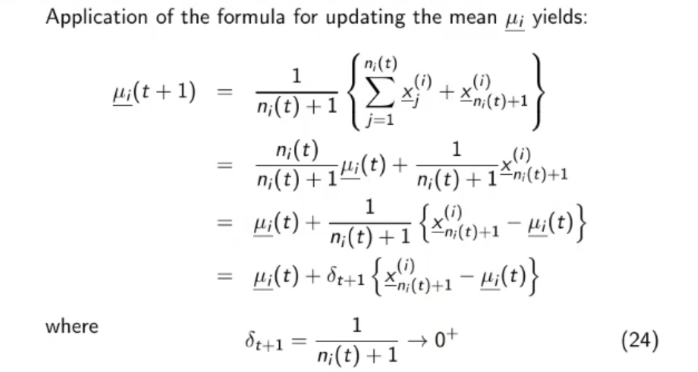

Where does the learning formula of the CNN come from?

- We need to define the parameter vector as a function of time since, this being an on-line learning algorithm, it may change at each iteration.

- In our case this vector is the vector of the weights of the network.

- So will become equal to:

- will become equal to:

- We can simplify in the second passage since
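As a sketch of one standard route to a rule of this kind, assume a squared Euclidean criterion $C$ between the input $\mathbf{x}(t)$ and the winner's weight vector $\mathbf{w}_c(t)$, minimized by gradient descent with learning rate $\eta$. All of these symbols are assumptions, not taken from these notes.

$$
\begin{aligned}
C(t) &= \tfrac{1}{2}\,\lVert \mathbf{x}(t) - \mathbf{w}_c(t) \rVert^2 \\
\frac{\partial C(t)}{\partial \mathbf{w}_c(t)} &= -\bigl(\mathbf{x}(t) - \mathbf{w}_c(t)\bigr) \\
\mathbf{w}_c(t+1) &= \mathbf{w}_c(t) - \eta\,\frac{\partial C(t)}{\partial \mathbf{w}_c(t)} = \mathbf{w}_c(t) + \eta\,\bigl(\mathbf{x}(t) - \mathbf{w}_c(t)\bigr)
\end{aligned}
$$

With $\eta = 1/n$, where $n$ is the number of inputs unit $c$ has won so far (including the current one), the same update is the running mean of those inputs.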

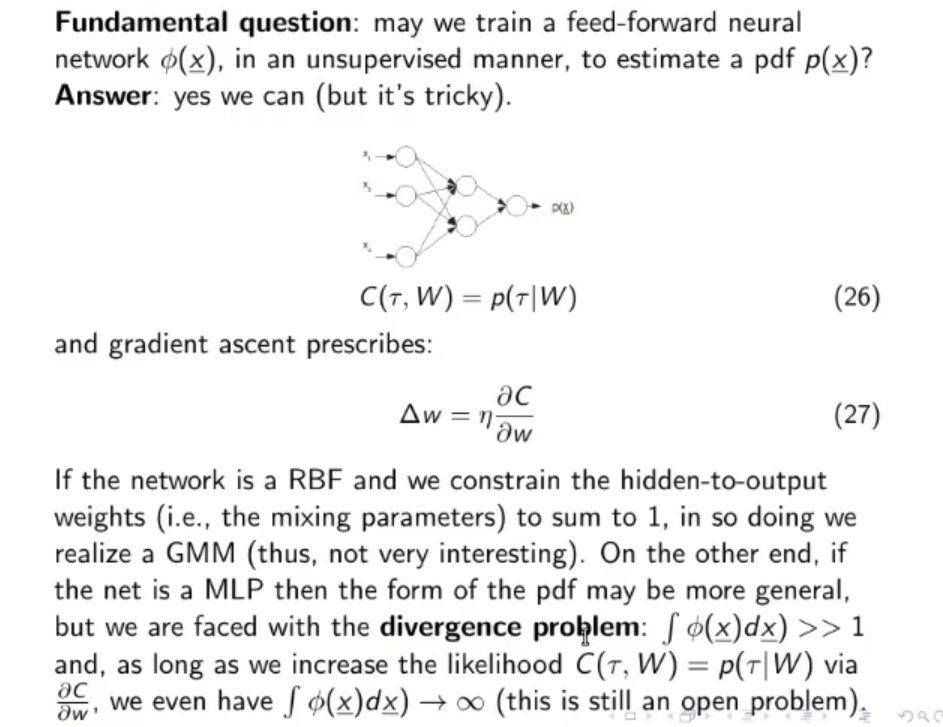

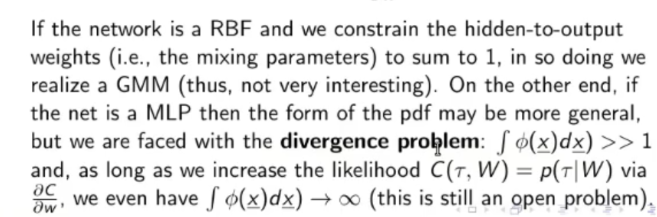

Can we train a feed-forward neural network in an unsupervised manner to estimate a PDF? ⇒ Yes, we can, but it’s tricky.

We need to make the cost function:

Where is the output of our neural network. TODO: explain what this formula means.
