Learning Methods for ANN

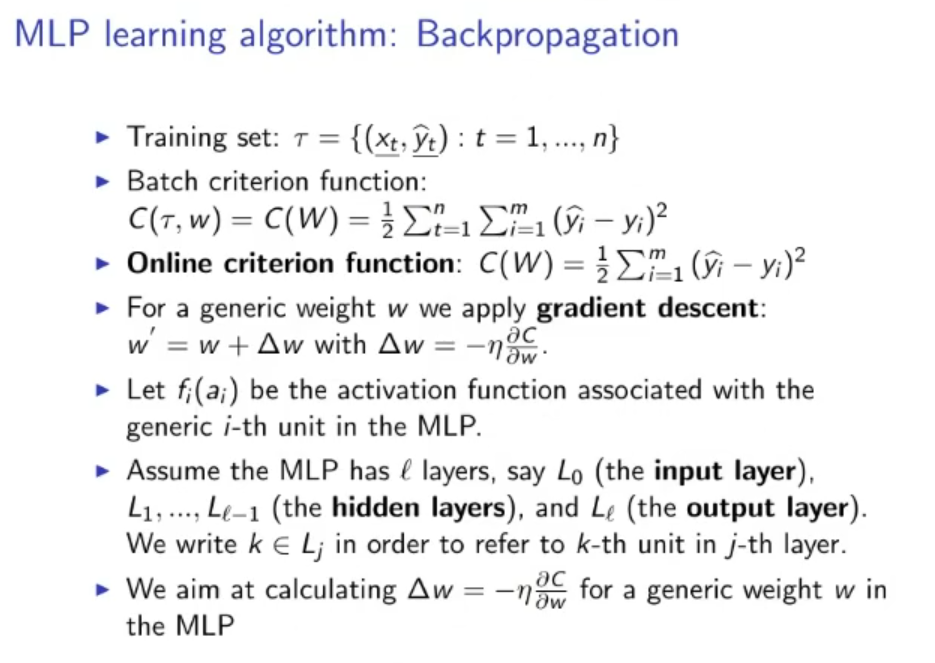

First we need to decide on a cost function and on how many training samples are seen before each weight update:

- We can decide on seeing all training samples before taking a step of backpropagation using the Batch Criterion Function

- Or we can decide to take a step of backpropagation after each training sample, using the Online Criterion Function
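The two choices above can be sketched on a toy problem. This is a minimal illustration, not a full ANN: it assumes a single linear neuron `y = w * x` with a squared-error cost, and the function name `train`, the toy data, and the learning rate are all hypothetical.

```python
def train(samples, lr=0.1, epochs=50, mode="batch"):
    """Fit y = w * x with squared error; returns (w, number_of_updates)."""
    w, updates = 0.0, 0
    for _ in range(epochs):
        if mode == "batch":
            # Batch: average the gradient over all samples, then take one step.
            grad = sum((w * x - t) * x for x, t in samples) / len(samples)
            w -= lr * grad
            updates += 1
        else:
            # Online: take one step after each training sample.
            for x, t in samples:
                w -= lr * (w * x - t) * x
                updates += 1
    return w, updates


data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]  # toy data with t = 2x (assumed)
w_batch, n_batch = train(data, mode="batch")
w_online, n_online = train(data, mode="online")
print(n_batch, n_online)  # 50 150
```

With three samples and 50 epochs, batch mode performs one update per epoch while online mode performs one per sample, which is the only structural difference between the two loops.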

At each update step we need to apply the Gradient Descent formula to each weight.

- This formula is just a reference, since it can be used directly only to calculate the $\Delta w$ of the output layer; the more general formula is the Delta Rule

Batch Criterion Function

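$$J(\mathbf{w}) = \frac{1}{2N}\sum_{n=1}^{N}\sum_{k=1}^{c}\left(t_k^{(n)} - z_k^{(n)}\right)^2$$

Where:

- $D$: is the training set (supervised), containing the $N$ samples

- $\mathbf{w}$: weight

- $N$: number of all the data in the training set

- $c$: dimension of the output.

- $t_k^{(n)}$: desired (target) output for sample $n$.

- $z_k^{(n)}$: predicted output given by the ANN.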
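As a rough sketch of how a batch cost can be computed, the snippet below assumes the common squared-error form with a `1/(2N)` factor; the function name `batch_cost` and the sample values are hypothetical.

```python
def batch_cost(targets, outputs):
    """J = 1/(2N) * sum over samples n and output units k of (t_k - z_k)^2."""
    n_samples = len(targets)
    total = 0.0
    for t, z in zip(targets, outputs):
        # Inner sum over the c output units of one sample.
        total += sum((tk - zk) ** 2 for tk, zk in zip(t, z))
    return total / (2 * n_samples)


targets = [[1.0, 0.0], [0.0, 1.0]]  # N = 2 samples, c = 2 outputs (assumed)
outputs = [[0.5, 0.5], [0.0, 1.0]]  # predictions z from the network (assumed)
print(batch_cost(targets, outputs))  # 0.125
```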

Online Criterion Function

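$$J(\mathbf{w}) = \frac{1}{2}\sum_{k=1}^{c}\left(t_k - z_k\right)^2$$

Here $t_k$ and $z_k$ are the desired and predicted outputs for a single training sample, and $c$ is the dimension of the output. The difference from the Batch Criterion is that in online mode only one sample's output is considered when calculating the cost $J$, while in batch mode each cost considers the outputs of all $N$ samples (a batch).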
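To make the contrast concrete, the sketch below computes one cost per sample, again assuming a squared-error form with a `1/2` factor. Under that assumption, and if the batch cost is normalised by `1/(2N)`, the batch cost is simply the mean of the per-sample costs. The name `online_cost` and the data are hypothetical.

```python
def online_cost(t, z):
    """Cost for a single sample: 1/2 * sum over output units of (t_k - z_k)^2."""
    return 0.5 * sum((tk - zk) ** 2 for tk, zk in zip(t, z))


targets = [[1.0, 0.0], [0.0, 1.0]]  # assumed toy targets
outputs = [[0.5, 0.5], [0.0, 1.0]]  # assumed network predictions

# One cost value per training sample (online mode).
per_sample = [online_cost(t, z) for t, z in zip(targets, outputs)]
print(per_sample)  # [0.25, 0.0]

# Averaging the per-sample costs recovers a 1/(2N)-normalised batch cost.
mean_cost = sum(per_sample) / len(per_sample)
```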

Gradient Descent

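$$w^{\text{new}} = w^{\text{old}} + \Delta w$$

Where $\Delta w$ is calculated as:

$$\Delta w = -\eta \frac{\partial J}{\partial w}$$

Where:

- $w$: weight

- $\eta$: learning rate ($\eta > 0$), which is decided arbitrarily (can also be non-constant)

- $J$: Cost Function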
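The update rule can be sketched for a single weight as follows. The cost `J(w) = (w - 3)^2`, the helper name `gradient_descent`, and the `eta0 / (1 + decay * step)` schedule are assumptions chosen for illustration; the schedule is just one possible way to make the learning rate non-constant.

```python
def gradient_descent(grad, w0, eta0=0.1, decay=0.0, steps=100):
    """Repeatedly apply w <- w + delta_w with delta_w = -eta * dJ/dw."""
    w = w0
    for step in range(steps):
        # Learning rate: constant when decay == 0, shrinking otherwise.
        eta = eta0 / (1.0 + decay * step)
        delta_w = -eta * grad(w)
        w = w + delta_w
    return w


def grad_J(w):
    """Derivative of the assumed toy cost J(w) = (w - 3)^2."""
    return 2.0 * (w - 3.0)


w_const = gradient_descent(grad_J, w0=0.0)              # constant learning rate
w_decay = gradient_descent(grad_J, w0=0.0, decay=0.01)  # decaying learning rate
print(w_const, w_decay)
```

Both runs move the weight toward the minimiser `w = 3`; the decaying schedule takes smaller steps later on, so it approaches the minimum more slowly in this example.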
