CNN (Competitive Neural Networks)

“A Competitive Neural Network is the equivalent of clustering for the Neural Network family”

The idea behind CNNs is that the output neurons must compete among themselves to become active.

CNNs are used to classify a set of input patterns.

There are two kinds of CNN:

- Competitive Only Neural Network (only ONE output may be active at once)

- Hebbian Neural Network (SEVERAL outputs may be active at once)

We will see just the Competitive Only Neural Networks.

The competitive learning rule has three main points:

- A set of neurons that are all the same, except for their synaptic weights

- A limit imposed on the strength of each neuron

- A mechanism that permits the neurons to compete, implementing a winner-takes-all strategy. To do this we can connect the output neurons to each other and require these lateral connections to be inhibitory (their weights are negative)

CNN Definition:

Please note the similarity with K-Means Clustering

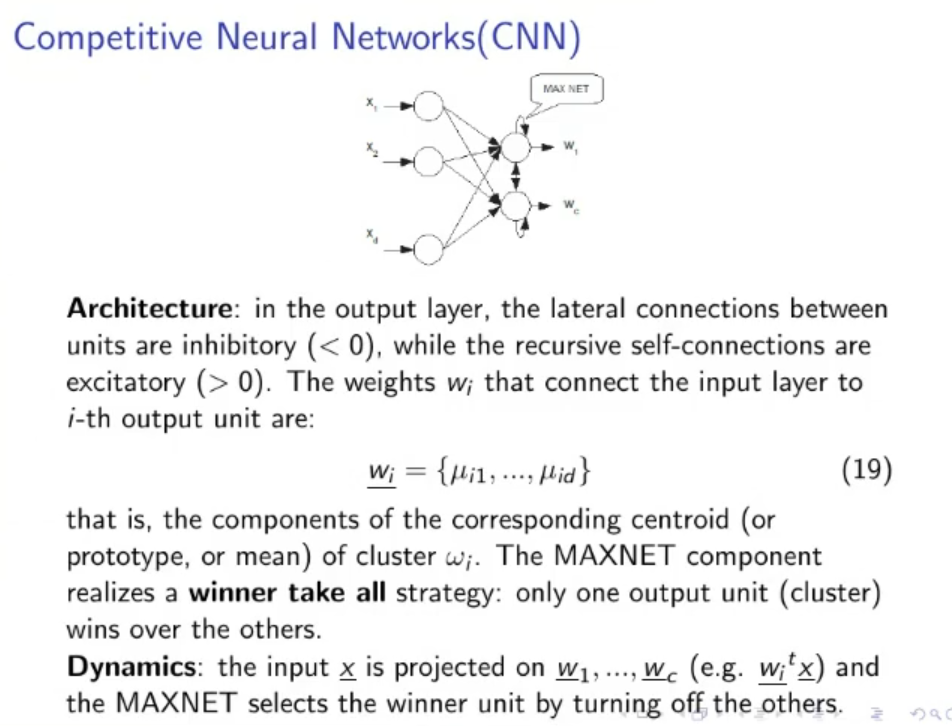

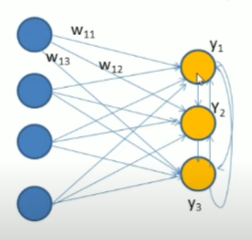

STRUCTURE:

- Inputs: one for each dimension of our feature space

- Outputs: their number is decided arbitrarily (like the number of clusters in K-Means Clustering)

- No hidden layer (1-layer neural network)

- All output neurons must have the same activation function (usually linear)

- The output neurons are fully connected by lateral connections: each output neuron is connected to every other output neuron. These lateral connections are inhibitory (their weights must be negative)

- To limit the weights we impose the following rule:
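A common way to write this constraint in textbook treatments of competitive learning is shown here as an assumption, since the notation is not fixed by these notes. Writing $w_{ij}$ for the weight from input $i$ to output neuron $j$, each output neuron is given the same total amount of synaptic weight:

$$
\sum_{i} w_{ij} = 1 \qquad \text{for every output neuron } j
$$

Under this form, a neuron can only strengthen one of its connections by weakening the others.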

To understand the subscripts please note the following figure:

DYNAMICS:

- Feedforward neural network.

- After the outputs are all calculated, only the neuron with the maximum output is set on (MAXNET):

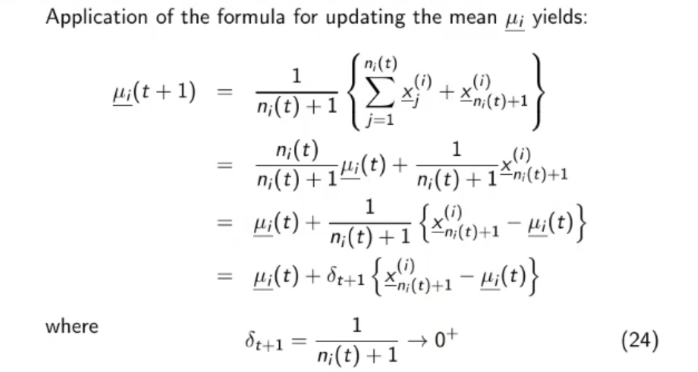
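The dynamics above can be sketched in a few lines of Python. This is a minimal illustration, not the implementation from the course: the names `forward` and `winner_take_all`, the weight layout (one row per output neuron), and the example numbers are all assumptions, and the MAXNET stage is replaced here by a plain argmax, which selects the same winner whenever the maximum is unique.

```python
def forward(weights, x):
    """Linear activation of every output neuron: y_j = sum_i weights[j][i] * x[i]."""
    return [sum(w_i * x_i for w_i, x_i in zip(w_j, x)) for w_j in weights]


def winner_take_all(activations):
    """Return (winner index, one-hot outputs); on a tie the first maximum wins."""
    winner = max(range(len(activations)), key=lambda j: activations[j])
    return winner, [1 if j == winner else 0 for j in range(len(activations))]


# Hypothetical network: 3 inputs, 2 output neurons (one row of weights per neuron).
weights = [[0.7, 0.2, 0.1],
           [0.1, 0.2, 0.7]]
x = [0.0, 0.1, 0.9]

activations = forward(weights, x)
winner, outputs = winner_take_all(activations)
print(activations, winner, outputs)
```

With these numbers the second neuron has the larger activation, so it is the only one left on.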

LEARNING:

==ONLY THE WEIGHTS RELATIVE TO THE WINNER OUTPUT ARE CHANGED==

The learning is also ONLINE: each single sample passed to the network changes the weights. Say the winner is the only output with value 1, while all the other outputs are inactive (value 0). The change is defined as:

It is applied only to the weights whose subscript refers to the winner output; all the other weights remain unchanged.

==This Delta makes the weights more similar to the inputs.==
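The winner-only update described above is usually written as follows in textbook treatments of competitive learning. The symbols are assumptions, not notation taken from these notes: $x_i$ is input $i$, $w_{ij}$ is the weight from input $i$ to output $j$, $j^*$ is the winner, and $\eta \in (0, 1)$ is the learning rate.

$$
\Delta w_{ij} =
\begin{cases}
\eta \, (x_i - w_{ij}) & \text{if } j = j^* \\
0 & \text{otherwise}
\end{cases}
$$

Substituting $w_{ij^*}^{\text{new}} = w_{ij^*}^{\text{old}} + \Delta w_{ij^*}$ shows why the weights become more similar to the inputs:

$$
w_{ij^*}^{\text{new}} - x_i = (1 - \eta) \, \bigl( w_{ij^*}^{\text{old}} - x_i \bigr)
$$

Each update shrinks the gap between the winner's weights and the current sample by a factor of $1 - \eta$.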

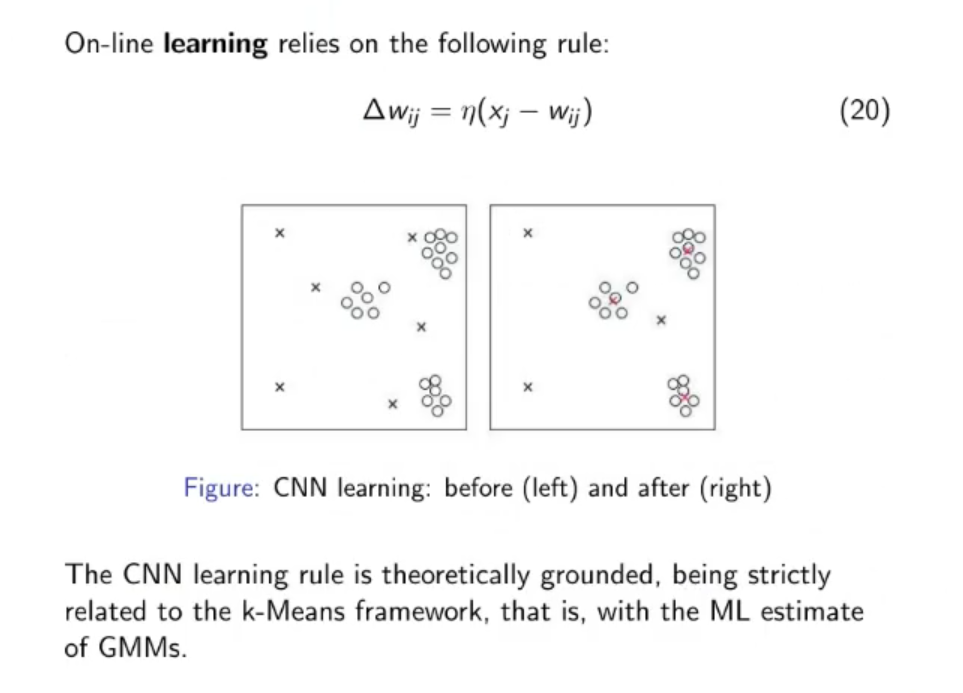

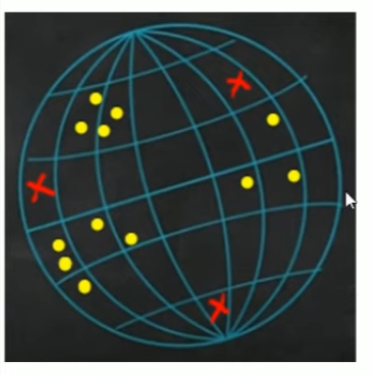

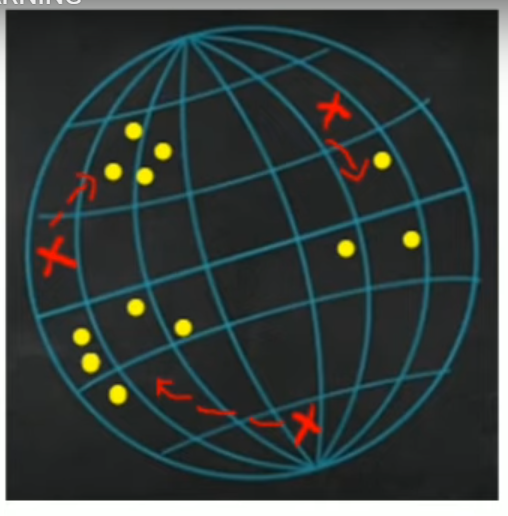

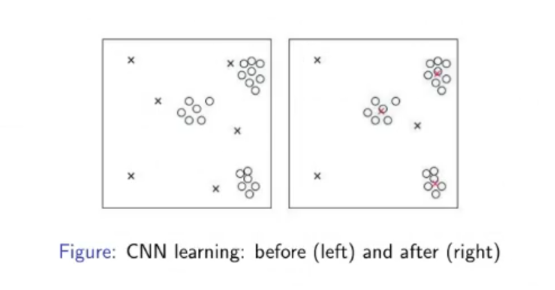

CNN Example

Assuming our feature vectors are 3D:

Starting point:

- Yellow dots : training set

- Red Crosses : Weights of the NN

Iteration over time:

- The red crosses will move toward the centers of the clusters

Really similar to K-Means Clustering

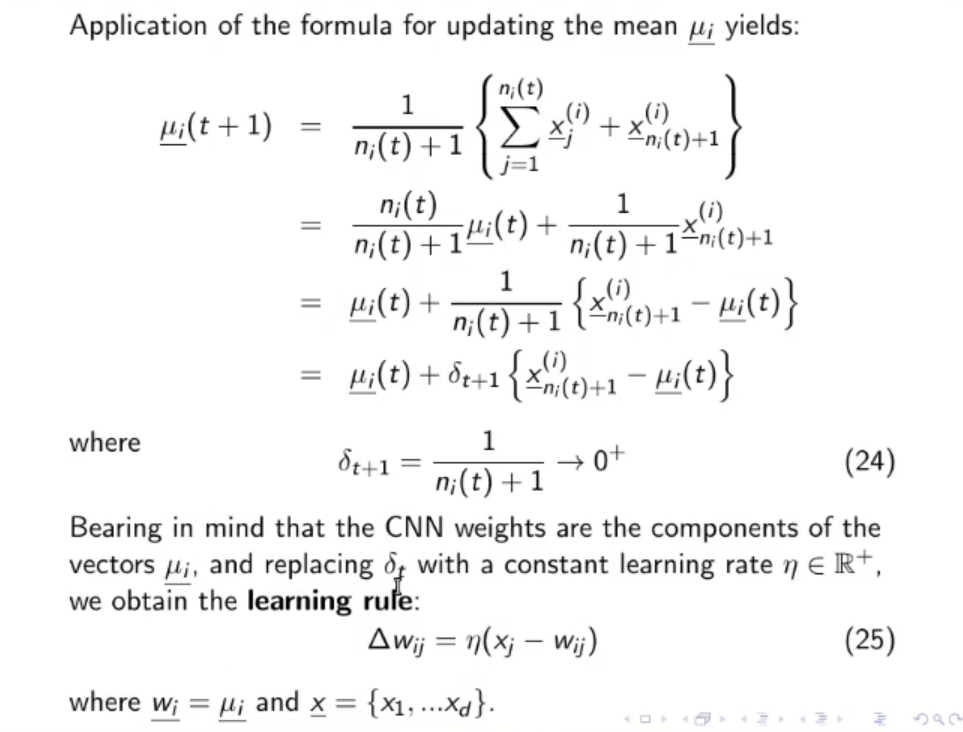
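The behaviour in this example can be reproduced with a small pure-Python sketch. Everything concrete here is an assumption made for illustration: the two Gaussian blobs, the starting weights, the learning rate `eta`, and the helper names `closest_unit` and `train`. The winner is also chosen as the weight vector closest to the sample, which is a simplification: it coincides with the maximum linear output only when the weight vectors have equal norm.

```python
import random


def closest_unit(weights, x):
    """Index of the weight vector nearest to x (squared Euclidean distance)."""
    def sq_dist(w):
        return sum((xi - wi) ** 2 for xi, wi in zip(x, w))
    return min(range(len(weights)), key=lambda j: sq_dist(weights[j]))


def train(samples, init_weights, eta=0.1, epochs=20, seed=0):
    """On-line competitive learning: one winner-only update per sample."""
    rng = random.Random(seed)
    weights = [list(w) for w in init_weights]  # copy, leave the caller's lists alone
    for _ in range(epochs):
        order = list(samples)
        rng.shuffle(order)  # present the samples in a new random order each epoch
        for x in order:
            j = closest_unit(weights, x)
            # Move only the winner's weights a fraction eta toward the sample.
            weights[j] = [wi + eta * (xi - wi) for wi, xi in zip(weights[j], x)]
    return weights


# Hypothetical 3D training set ("yellow dots"): blobs around (0,0,0) and (5,5,5).
rng = random.Random(42)
blob_a = [[rng.gauss(0.0, 0.3) for _ in range(3)] for _ in range(50)]
blob_b = [[rng.gauss(5.0, 0.3) for _ in range(3)] for _ in range(50)]

# Hypothetical starting weights ("red crosses"), one per output neuron.
trained = train(blob_a + blob_b, [[1.0, 1.0, 1.0], [4.0, 4.0, 4.0]])
print(trained)
```

After training, each weight vector sits close to the center of one blob, much like a K-Means centroid. Starting weights that are far from every sample may never win and therefore never move, which is why the initial positions were chosen between the two blobs here.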

From the Slides:

ARCHITECTURE: In the output layer, there are two more types of connections:

- Lateral connection (each output neuron is connected to each other output)

- Self-connections (each output neuron has a connection that goes from itself to itself)

The lateral connections are inhibitory (their weights are negative), while the self-connections are excitatory (their weights are positive).

Also, at the end of the network there is a MAXNET component that implements a winner-takes-all strategy: only one of the output units (a cluster) wins over the others (the losers are set to 0).
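The MAXNET competition can be run iteratively using exactly these two kinds of connections. The sketch below follows the usual textbook formulation, which may differ in detail from the slides: every unit keeps its own activation through a self-connection of weight +1, subtracts `epsilon` times the sum of the other activations through the lateral connections, and is clipped at zero. The function name `maxnet`, the default `epsilon`, and the example activations are assumptions.

```python
def maxnet(activations, epsilon=None, max_iters=1000):
    """Iterate mutual inhibition until at most one unit is still positive."""
    m = len(activations)
    if epsilon is None:
        epsilon = 1.0 / (2 * m)  # assumed default; the usual condition is 0 < epsilon < 1/m
    a = [max(0.0, v) for v in activations]
    for _ in range(max_iters):
        if sum(1 for v in a if v > 0) <= 1:
            break  # a single survivor (or none): the competition is over
        total = sum(a)
        # Self-connection (+1) keeps v; lateral connections subtract
        # epsilon times the activations of all the other units.
        a = [max(0.0, v - epsilon * (total - v)) for v in a]
    return a


# Hypothetical initial activations of four output units.
result = maxnet([0.2, 0.4, 0.6, 0.8], epsilon=0.2)
print(result)
```

Only the unit that started with the largest activation remains positive; the others are driven to 0. If two units tie exactly for the maximum they inhibit each other equally, so the loop relies on `max_iters` to stop.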

DYNAMICS: The dynamics are simple: the input is passed to the network, the network produces its outputs (one for each output neuron), then the MAXNET selects the winner and turns off all the other outputs.

LEARNING: On-line learning that relies on the following rule:

NOTE: This formula is “theoretically grounded”. Ergo “It’s a good learning formula, we don’t need to search for another one”
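One piece of intuition for why a rule of this kind is well behaved, offered as an illustration and not necessarily as the argument from the slides: if a unit moves toward each sample it wins by a fraction `1/n`, where `n` counts the samples it has won so far, its weight vector is exactly the running mean of those samples, which is the centroid K-Means would compute. The function name `online_mean` and the decaying learning rate are assumptions; the notes themselves use a generic learning rate.

```python
def online_mean(samples):
    """Apply w <- w + (1/n) * (x - w) sample by sample, starting from the first sample."""
    w = list(samples[0])
    for n, x in enumerate(samples[1:], start=2):
        eta = 1.0 / n  # learning rate shrinks as more samples are seen
        w = [wi + eta * (xi - wi) for wi, xi in zip(w, x)]
    return w


# Hypothetical samples all won by the same unit.
samples = [[1.0, 2.0], [3.0, 6.0], [5.0, 1.0]]
w = online_mean(samples)

# Batch centroid of the same samples, for comparison.
centroid = [sum(col) / len(samples) for col in zip(*samples)]
print(w, centroid)
```

With a constant learning rate the weight vector is instead an exponentially weighted average that favours recent samples, so it keeps tracking the cluster without needing to store a count.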

Here we see an example of the learning of a CNN:

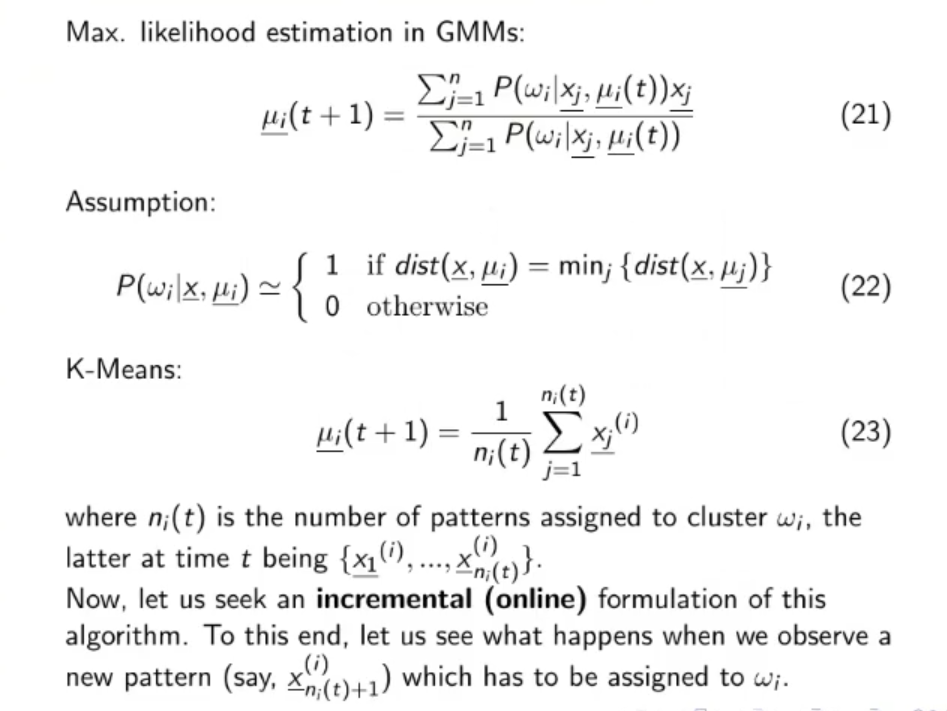

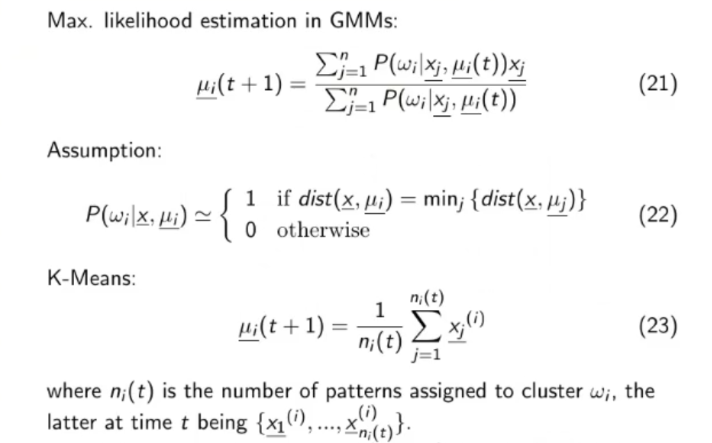

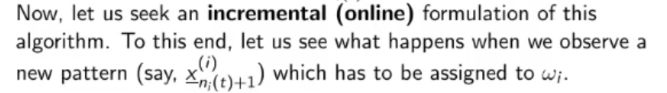

Where does the learning formula of the CNN come from?

- We need to define as a function dependent on time, since being an on-line learning algorithm will change possibly at each iteration.

- In our case the vector is the vector of the weights of the network.

- So will become equal to:

- will become equal to:

- We can simplify in the second passage since