Fast Recap:

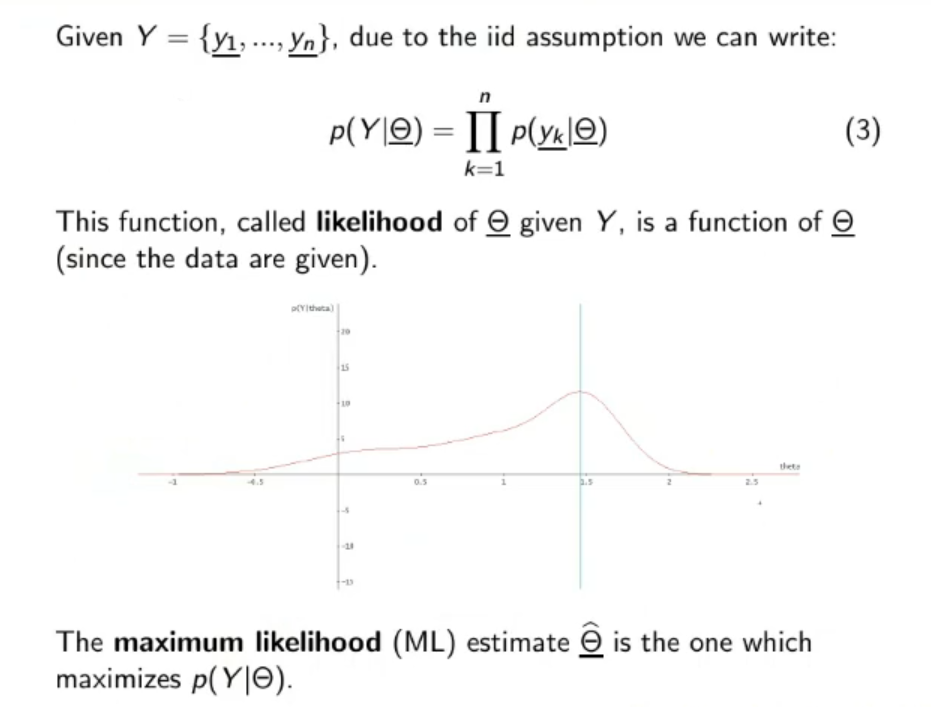

Likelihood: Consider a set of data $X = \{x_1, \dots, x_N\}$ drawn from the distribution $p(x \mid \theta)$. Since all samples come from the same distribution, they are identically distributed; suppose also that they are independent of each other. ⇒ The samples are iid (independent and identically distributed).

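Due to the independence assumption, the joint density of the $N$ samples $x_k$ in $X$ factorizes:

$$p(X \mid \theta) = \prod_{k=1}^{N} p(x_k \mid \theta)$$

This is called the likelihood of $\theta$ given $X$. With the data fixed, it is a function of $\theta$: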

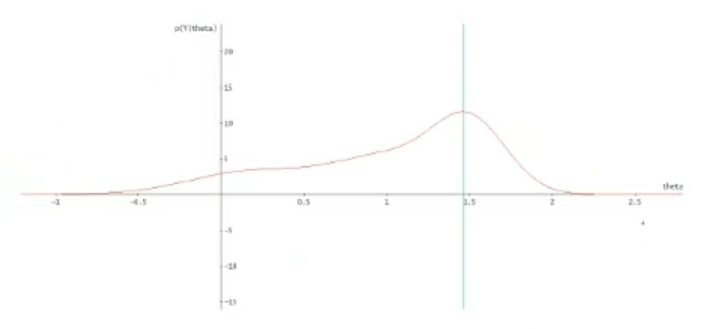

We call the Maximum Likelihood (ML) estimate the value of $\theta$ that maximizes $p(X \mid \theta)$: $\hat{\theta}_{ML} = \arg\max_{\theta} p(X \mid \theta)$.
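To make the "likelihood as a function of $\theta$" idea concrete, here is a minimal sketch that evaluates the likelihood on a grid and picks the maximizer. It assumes a 1-D Gaussian with known standard deviation, so the only unknown parameter is the mean; the synthetic data, the grid range, and the names `likelihood`, `mu_grid` and `mu_ml` are illustrative choices, not part of the notes.

```python
import numpy as np

# Synthetic iid samples (assumption: true mean 2.0, known sigma 1.0).
rng = np.random.default_rng(0)
x = rng.normal(loc=2.0, scale=1.0, size=50)

def likelihood(mu, x, sigma=1.0):
    """p(X | mu) = prod_k p(x_k | mu) for a Gaussian with known sigma."""
    densities = np.exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * np.sqrt(2 * np.pi))
    return np.prod(densities)

# Evaluate the likelihood on a grid of candidate means (step 0.001).
mu_grid = np.linspace(0.0, 4.0, 4001)
values = np.array([likelihood(mu, x) for mu in mu_grid])

# The ML estimate is the candidate with the largest likelihood.
mu_ml = mu_grid[np.argmax(values)]
print(mu_ml, x.mean())
```

Under these assumptions the grid maximizer should land on the grid point closest to the sample mean, which matches the closed-form Gaussian result.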

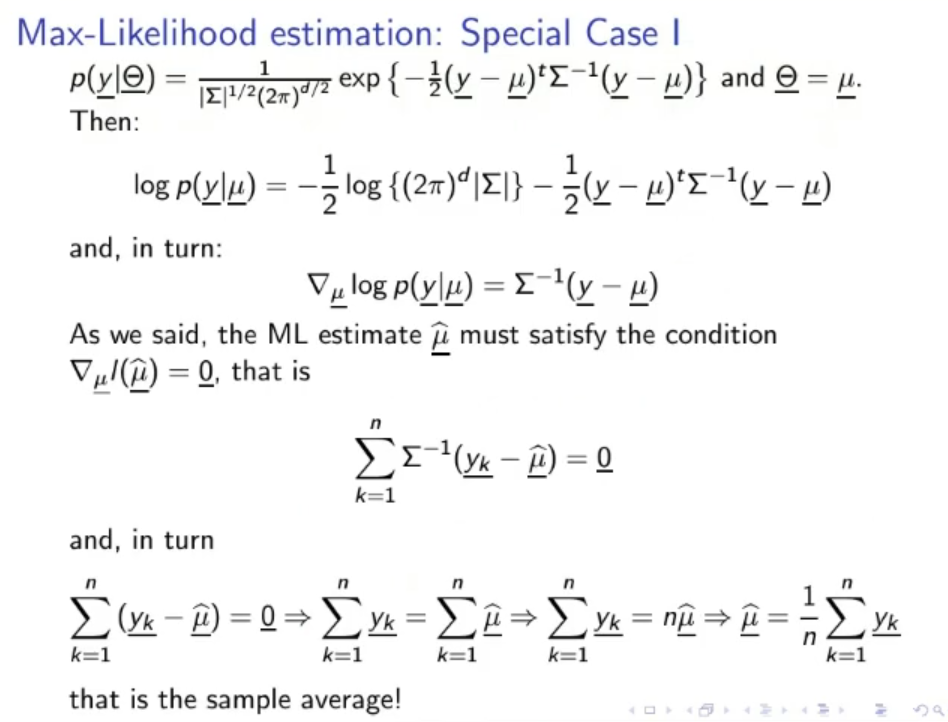

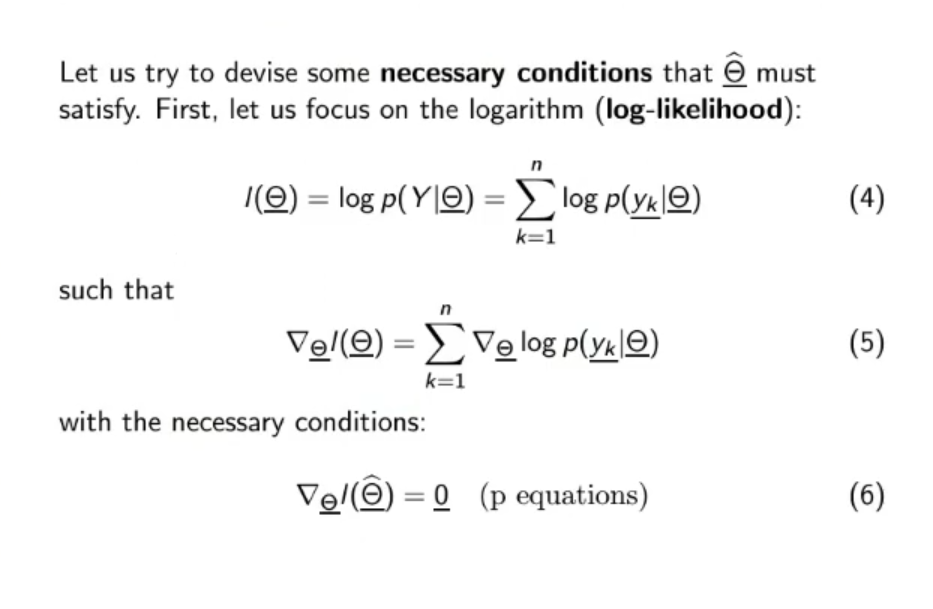

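Log-Likelihood: $L(\theta) = \ln p(X \mid \theta) = \sum_{k=1}^{N} \ln p(x_k \mid \theta)$

Since the logarithm is monotonically increasing, maximizing $L(\theta)$ gives the same estimate as maximizing the likelihood. At the maximum:

$$\nabla_{\theta} L(\theta) = \sum_{k=1}^{N} \nabla_{\theta} \ln p(x_k \mid \theta) = 0$$

Where:

- $\nabla_{\theta} L(\theta)$ is the gradient of the log-likelihood function with respect to $\theta$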
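A small numerical sketch of why the logarithm is used in practice, and of the zero-gradient condition. It again assumes a 1-D Gaussian with known standard deviation and synthetic data; `log_likelihood`, `grad_log_likelihood` and `raw_product` are hypothetical names chosen for this example.

```python
import numpy as np

# Synthetic iid samples (assumption: known sigma, unknown mean).
rng = np.random.default_rng(1)
sigma = 2.0
x = rng.normal(loc=-1.0, scale=sigma, size=2000)

def log_likelihood(mu):
    """Sum over k of ln p(x_k | mu) for a Gaussian with known sigma."""
    return np.sum(-0.5 * np.log(2 * np.pi * sigma**2) - 0.5 * ((x - mu) / sigma) ** 2)

def grad_log_likelihood(mu):
    """d/dmu of the log-likelihood: sum_k (x_k - mu) / sigma^2."""
    return np.sum(x - mu) / sigma**2

# The raw product of 2000 densities (each below 0.2) underflows to 0.0,
# while the sum of logs stays finite.
raw_product = np.prod(
    np.exp(-0.5 * ((x - x.mean()) / sigma) ** 2) / (sigma * np.sqrt(2 * np.pi))
)
print(raw_product, log_likelihood(x.mean()))

# The gradient vanishes (up to rounding) at the sample mean.
print(grad_log_likelihood(x.mean()))
```

The gradient being numerically zero at the sample mean is the condition $\nabla_{\theta} L(\theta) = 0$ for this particular model.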

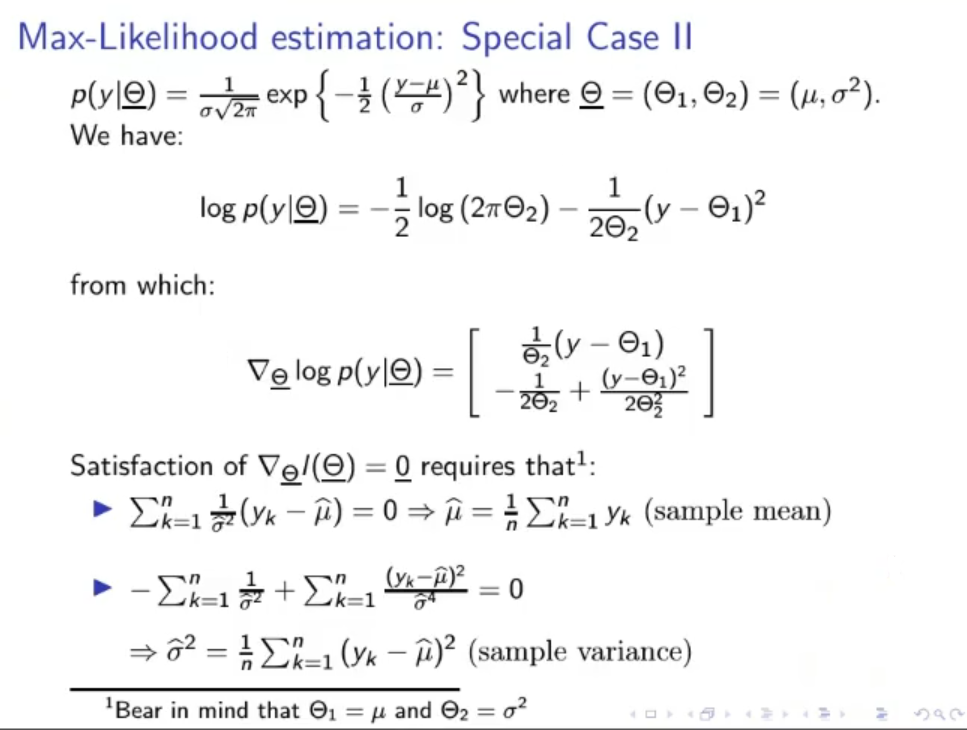

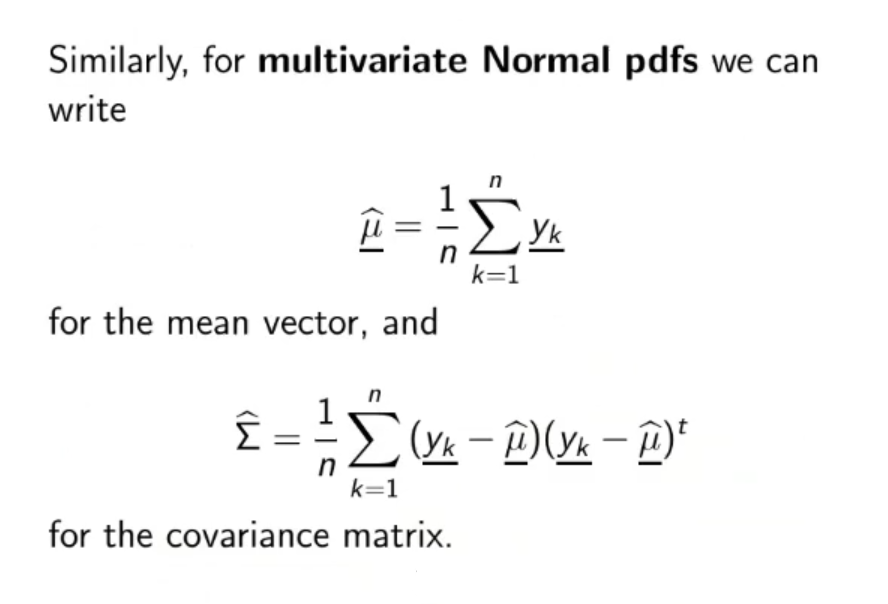

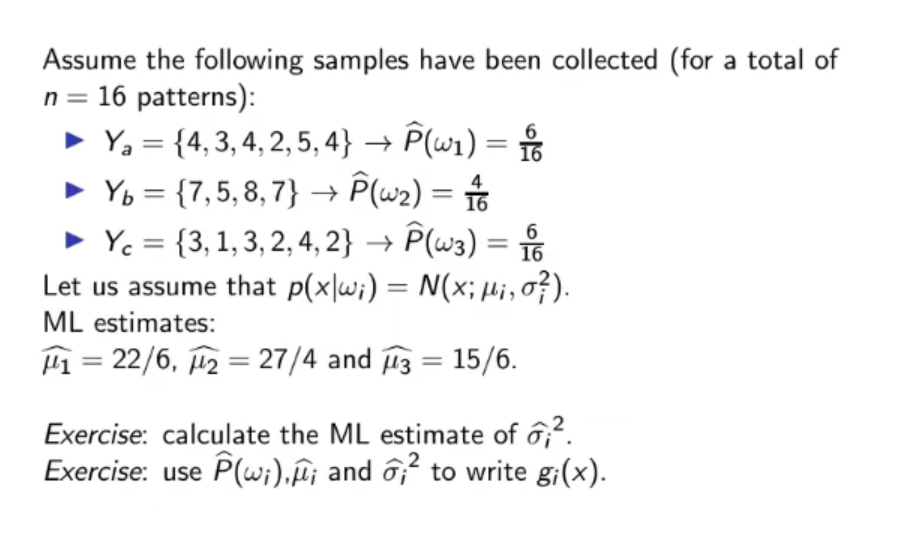

Example: If we know that $p(x \mid \theta)$ is a Gaussian distribution, the maximum likelihood estimates of the mean and variance are, respectively, the sample mean and the biased sample variance.

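Sample Mean: $\hat{\mu} = \frac{1}{N} \sum_{k=1}^{N} x_k$

Biased Sample Variance: $\hat{\sigma}^2 = \frac{1}{N} \sum_{k=1}^{N} (x_k - \hat{\mu})^2$

(Bonus) Unbiased Sample Variance: $s^2 = \frac{1}{N-1} \sum_{k=1}^{N} (x_k - \hat{\mu})^2$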
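The three estimators can be checked on a tiny hand-made data set. The numbers below are made up for illustration; the comparison against `np.var` relies on its `ddof` argument, which sets the divisor to `N - ddof`.

```python
import numpy as np

# Small illustrative data set (not from the notes).
x = np.array([2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0])
N = x.size

mu_hat = x.sum() / N                                  # sample mean
var_biased = np.sum((x - mu_hat) ** 2) / N            # ML estimate, divides by N
var_unbiased = np.sum((x - mu_hat) ** 2) / (N - 1)    # divides by N - 1

print(mu_hat, var_biased, var_unbiased)

# NumPy equivalents: ddof=0 (default) is the biased version, ddof=1 the unbiased one.
print(np.var(x), np.var(x, ddof=1))
```

For this data the squared deviations sum to 32, so the biased variance is 32/8 and the unbiased one is 32/7; the gap between the two shrinks as $N$ grows.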

Naming :

- $\mu$, $\boldsymbol{\mu}$: mean and mean vector

- $\sigma^2$, $\Sigma$: variance and covariance matrix

- $\theta$: parameter vector, for example:

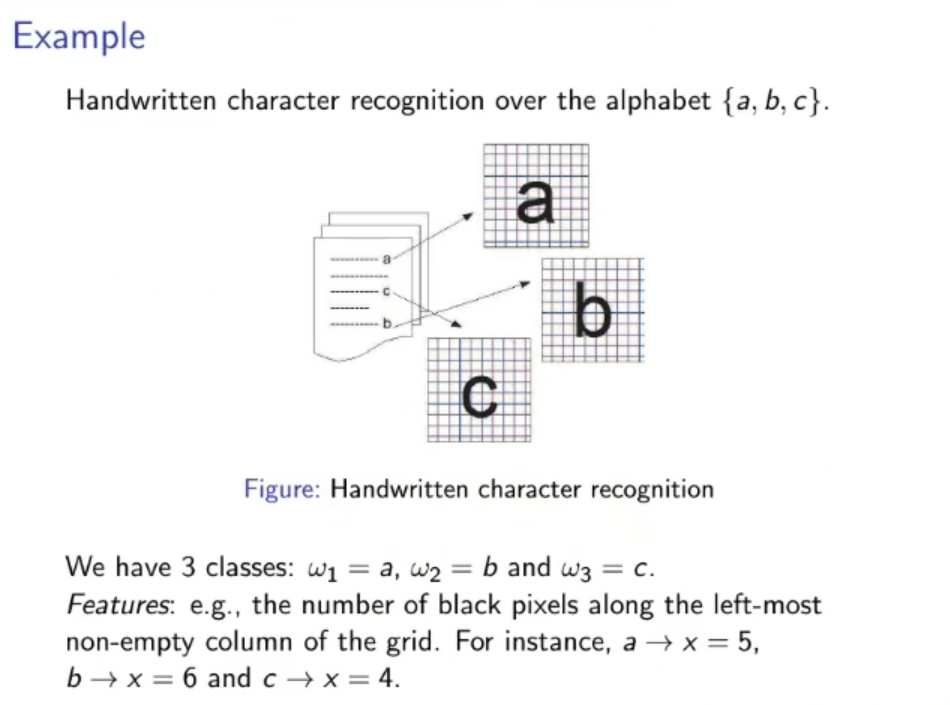

- $\omega_i$: classes, for example the gender (male/female) we want to identify.

- $w$: weight

- $w_0$: bias

- $x$: data; it can refer to input data or training data.

- $N$: number of samples used as the training set

- $P(\omega_i \mid x)$: probability that the data $x$ belongs to (is identified as) the class $\omega_i$.

- $g_i(x)$: discriminant function of class $\omega_i$, usually defined as $g_i(x) = w_i^T x + w_{i0}$ (in the linear case)

- decision rule: a simple decision rule could be: assign $x$ to $\omega_i$ if $g_i(x) > g_j(x)$ for all $j \neq i$

NOTE: weights are named with $w$, while classes are named with $\omega$; the two symbols look similar and may cause confusion.
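As a rough sketch of how linear discriminant functions and a simple decision rule fit together, the snippet below scores an input with one linear function per class and picks the class with the largest score. The weight matrix `W`, the bias vector `w0`, and the "largest discriminant wins" rule are assumptions made for this example, not values or definitions taken from the notes.

```python
import numpy as np

# Hypothetical parameters for 2 classes and 2 features:
# row i of W is the weight vector of class i, w0[i] is its bias.
W = np.array([[1.0, -1.0],
              [-1.0, 1.0]])
w0 = np.array([0.0, 0.5])

def discriminants(x):
    """Linear discriminants: one score w_i^T x + w_i0 per class."""
    return W @ x + w0

def decide(x):
    """Assumed decision rule: pick the class index with the largest score."""
    return int(np.argmax(discriminants(x)))

print(discriminants(np.array([2.0, 0.0])), decide(np.array([2.0, 0.0])))
print(discriminants(np.array([0.0, 2.0])), decide(np.array([0.0, 2.0])))
```

Keeping the weights in `W`/`w0` and the class as an integer index also sidesteps the weight-versus-class naming clash mentioned in the note.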
