Summary

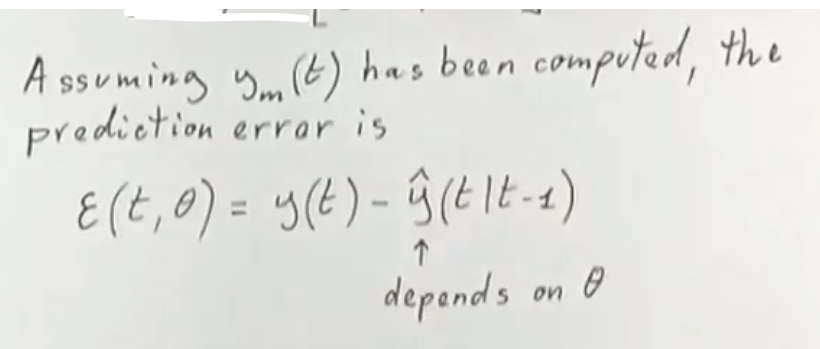

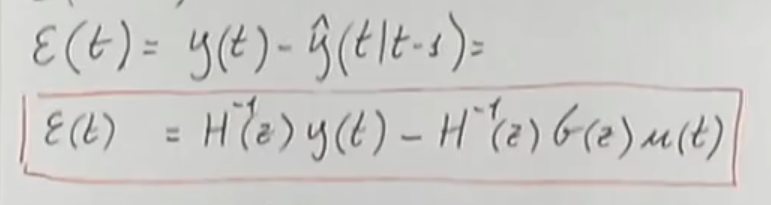

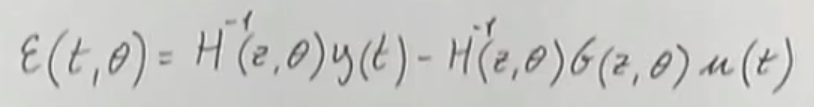

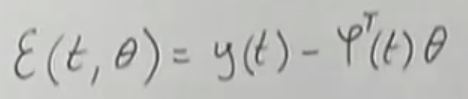

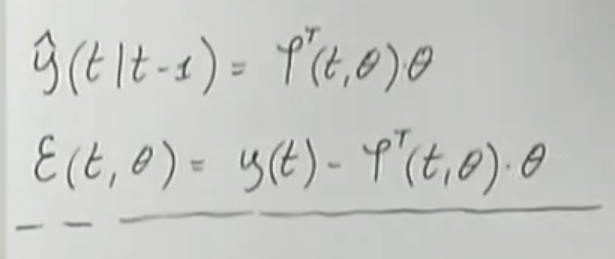

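The prediction error at time $t$, as a function of the parameter vector $\theta$, is:

$$\varepsilon(t, \theta) = y(t) - \hat{y}(t \mid t-1, \theta)$$

It can be written in two ways:

$$\varepsilon(t, \theta) = y(t) - \varphi(t)^T \theta \qquad \text{or} \qquad \varepsilon(t, \theta) = y(t) - \varphi(t, \theta)^T \theta$$

The first form applies to ARX models, where the regressor $\varphi(t)$ does not depend on the parameter vector $\theta$. The second applies to ARMAX, OE and BJ models, where $\varphi(t, \theta)$ depends on $\theta$ and is therefore called a pseudo-regressor.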

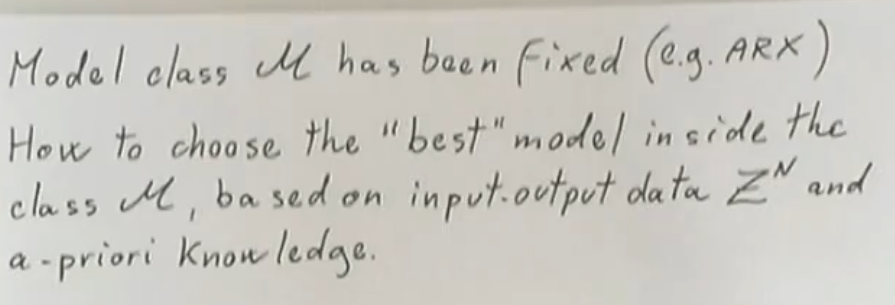

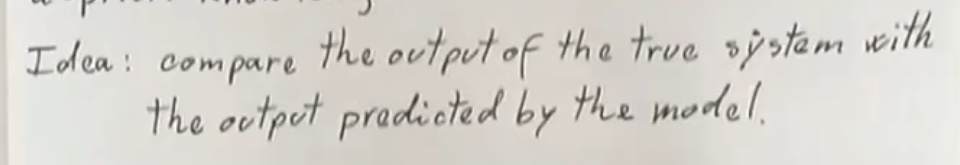

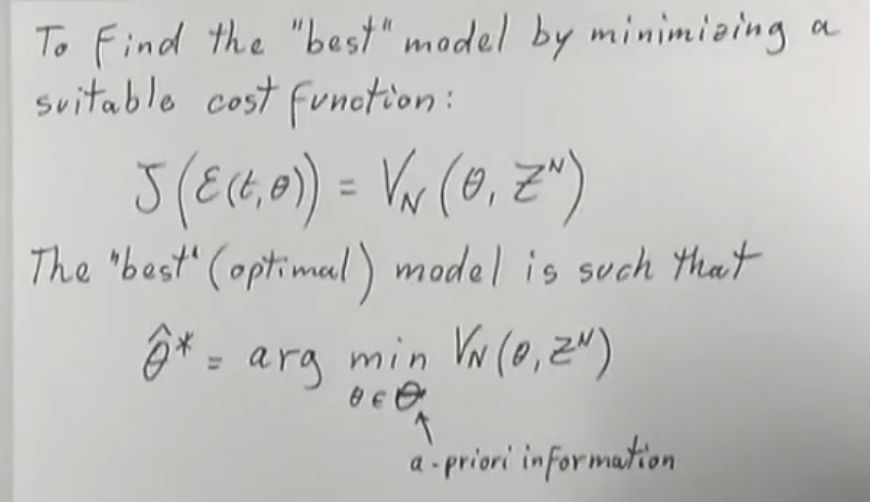

Choosing the Best Model

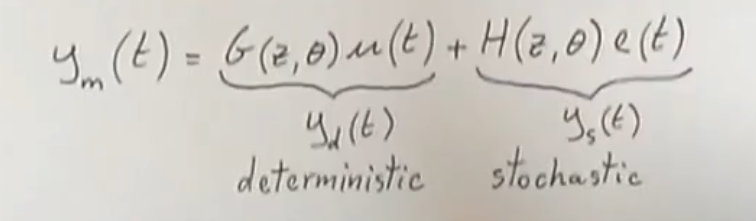

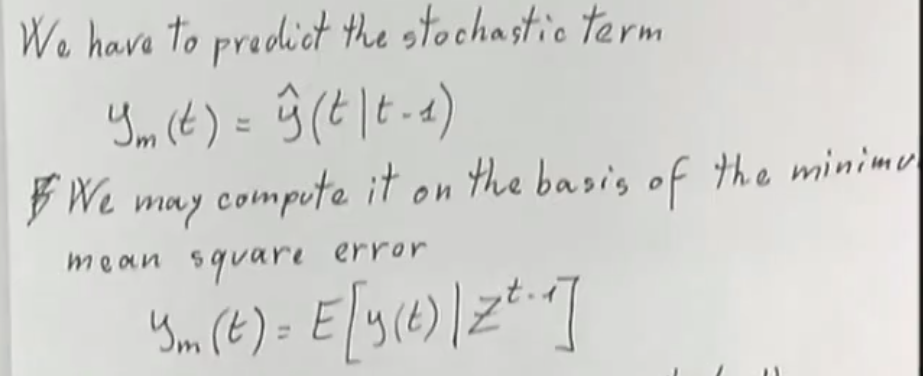

→ We have to predict the stochastic term.

To estimate the stochastic term we use the data set up to time $t-1$; then, to remove the stochastic term from the criterion, we take its mean.

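We may write the cost function, which depends on the prediction error $\varepsilon$, as a function of the parameter vector $\theta$ and of the data set: after all, $\varepsilon$ is calculated from those two arguments.

Of course, we define the optimal parameter vector $\hat{\theta}$ as the one that minimizes the cost function, in either of the two forms.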
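
As a minimal sketch of this idea, the snippet below evaluates a cost at two candidate parameter vectors. It assumes a quadratic cost (the mean of the squared prediction errors) and a first-order ARX structure; the notes do not fix either choice here, and the names `prediction_errors`, `cost`, `theta_true` and `theta_other` are purely illustrative.

```python
import numpy as np

def prediction_errors(theta, y, u):
    """One-step-ahead errors for the assumed model y(t) = -a*y(t-1) + b*u(t-1) + e(t)."""
    a, b = theta
    y_hat = -a * y[:-1] + b * u[:-1]   # prediction of y[1:], built from data up to t-1
    return y[1:] - y_hat

def cost(theta, y, u):
    """Assumed quadratic cost: mean of the squared prediction errors."""
    return np.mean(prediction_errors(theta, y, u) ** 2)

# Synthetic data from a known system (a = -0.5, b = 1.0), for illustration only.
rng = np.random.default_rng(0)
N = 500
u = rng.standard_normal(N)
e = 0.1 * rng.standard_normal(N)
y = np.zeros(N)
for t in range(1, N):
    y[t] = 0.5 * y[t - 1] + 1.0 * u[t - 1] + e[t]

theta_true = np.array([-0.5, 1.0])
theta_other = np.array([0.0, 0.5])
J_true = cost(theta_true, y, u)    # close to the noise variance
J_other = cost(theta_other, y, u)  # larger: this theta explains the data worse
```

The cost is a function of $\theta$ only once the data set is fixed, which is why both ways of writing it refer to the same quantity.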

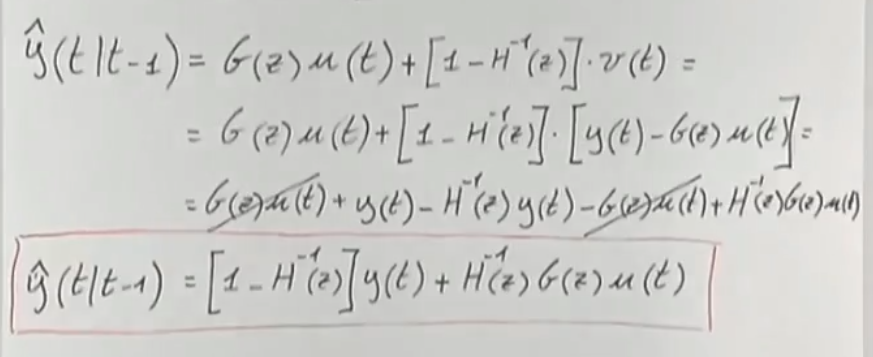

How can I predict the output $y(t)$?

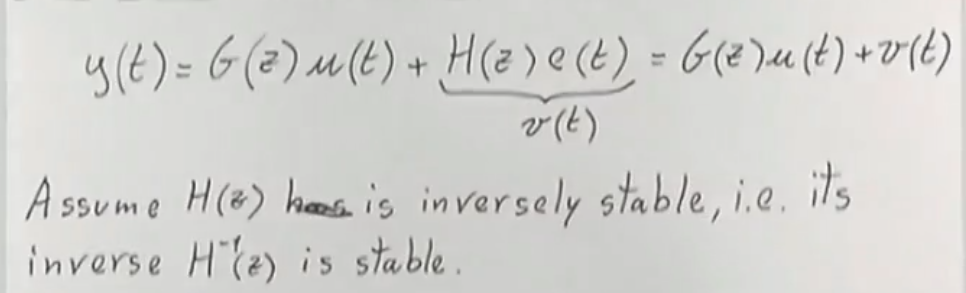

First we assume that $y(t)$ is given by the following model:

$$y(t) = G(q)u(t) + H(q)e(t)$$

You can think of $v(t) = H(q)e(t)$ as the filtered noise, while $e(t)$ is a generic stochastic process.

→ Inversely stable: all zeros and poles of $H(q)$ lie inside the unit circle.

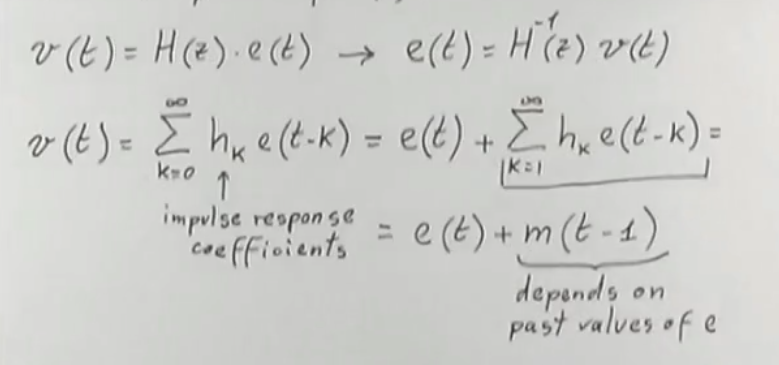

So:

Where you have to remember that $e(t)$ is unpredictable.

Then, since $e(t)$ is unpredictable, our best bet is to predict the stochastic term as:

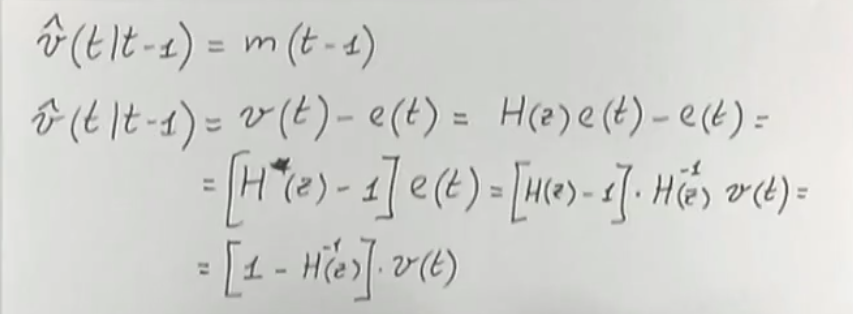

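Now we can calculate the prediction $\hat{y}(t \mid t-1)$:

$$\hat{y}(t \mid t-1) = H^{-1}(q)G(q)u(t) + \left(1 - H^{-1}(q)\right)y(t)$$

- $H^{-1}(q)G(q)$ has no constant term: its leading power is $q^{-1}$ (or lower), so this term depends only on past values of $u(t)$.

- The same also holds for $1 - H^{-1}(q)$, so this term depends only on past values of $y(t)$.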

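The error will be:

$$\varepsilon(t) = y(t) - \hat{y}(t \mid t-1)$$

It depends on both $G(q)$ and $H(q)$, and therefore on the parameter vector $\theta$ that defines them.

→ We will choose $\theta$ so as to minimize the previous cost function.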
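
For reference, the usual textbook route to the one-step-ahead predictor can be sketched as follows. It assumes that $H(q)$ is monic (its constant term is 1) and inversely stable, that $G(q)$ contains at least one delay, and it writes $v(t)$ for the stochastic term; these symbols and assumptions may differ from the ones in the figures.

```latex
\begin{aligned}
y(t) &= G(q)u(t) + v(t), \qquad v(t) = H(q)e(t) = e(t) + \bigl(H(q) - 1\bigr)e(t) \\
\hat{v}(t \mid t-1) &= \bigl(H(q) - 1\bigr)e(t) = \bigl(1 - H^{-1}(q)\bigr)v(t) \\
\hat{y}(t \mid t-1) &= G(q)u(t) + \bigl(1 - H^{-1}(q)\bigr)\bigl(y(t) - G(q)u(t)\bigr) \\
                    &= H^{-1}(q)G(q)u(t) + \bigl(1 - H^{-1}(q)\bigr)y(t) \\
\varepsilon(t) &= y(t) - \hat{y}(t \mid t-1) = H^{-1}(q)\bigl(y(t) - G(q)u(t)\bigr)
\end{aligned}
```

The second line uses the fact that $(H(q) - 1)e(t)$ involves only past values of $e$, while the current value $e(t)$ is unpredictable and is replaced by its mean, which is zero.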

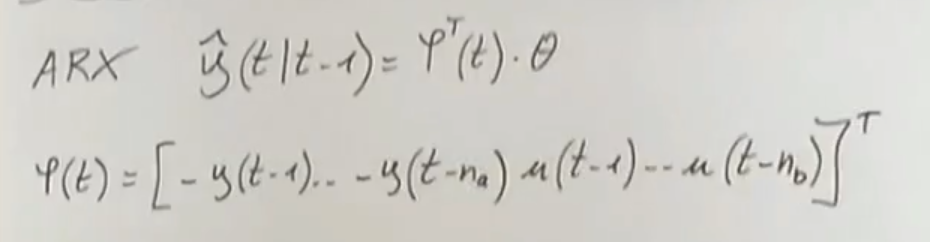

ARX Model (Error Function)

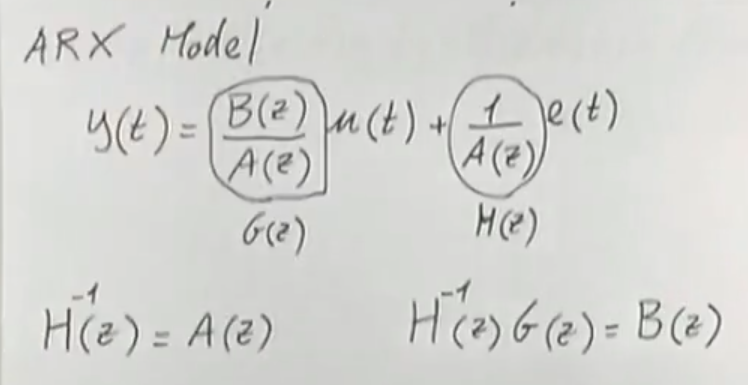

→ [ARX Model]

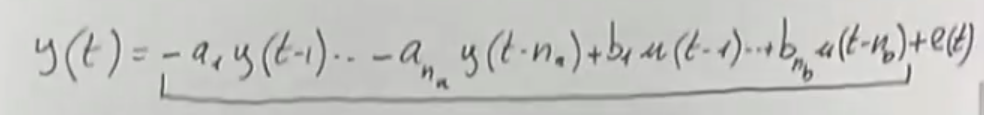

Remember that this is the structure of the ARX Model

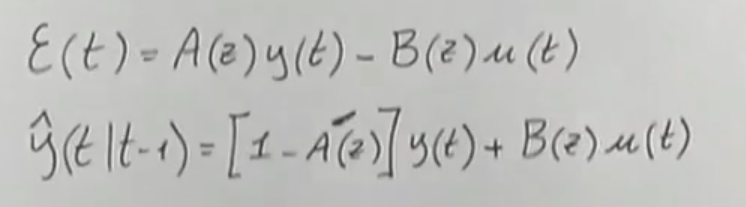

So we have that:

And $\hat{y}(t \mid t-1)$ is of the form (already seen):

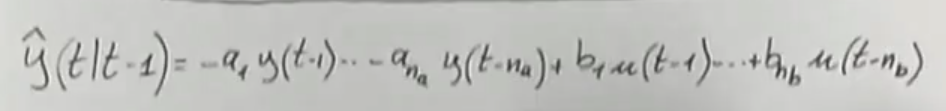

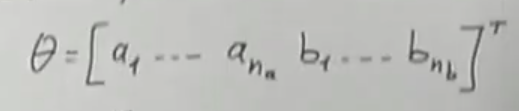

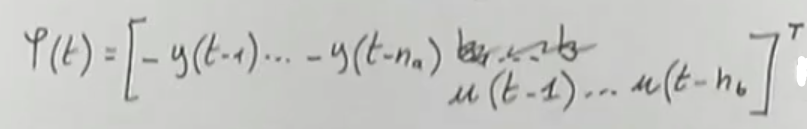

We can define the regressor vector as:

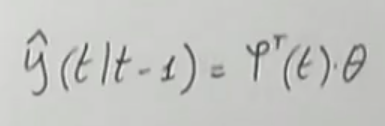

Such that:

$$\hat{y}(t \mid t-1, \theta) = \varphi(t)^T \theta$$

→ Nothing has changed from the explicit formula; it is just much more compact.

NOTE: The error is linear in $\theta$.
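
Because the error is linear in $\theta$, minimizing a quadratic cost reduces to ordinary least squares. The sketch below illustrates this under stated assumptions: the regressor convention $\varphi(t) = [-y(t-1), \dots, -y(t-n_a), u(t-1), \dots, u(t-n_b)]^T$ (the figure may order or sign the entries differently), a quadratic cost, and synthetic data. The helper `arx_regressors` is a hypothetical name.

```python
import numpy as np

def arx_regressors(y, u, na, nb):
    """Stack phi(t) = [-y(t-1..t-na), u(t-1..t-nb)] row by row, for t = max(na, nb)..N-1."""
    n = max(na, nb)
    rows = []
    for t in range(n, len(y)):
        past_y = [-y[t - k] for k in range(1, na + 1)]
        past_u = [u[t - k] for k in range(1, nb + 1)]
        rows.append(past_y + past_u)
    return np.array(rows), y[n:]

# Synthetic first-order ARX data: A(q) = 1 + a1 q^-1, B(q) = b1 q^-1 (illustrative values).
rng = np.random.default_rng(0)
N = 2000
a1, b1 = -0.7, 2.0
u = rng.standard_normal(N)
e = 0.1 * rng.standard_normal(N)
y = np.zeros(N)
for t in range(1, N):
    y[t] = -a1 * y[t - 1] + b1 * u[t - 1] + e[t]

Phi, Y = arx_regressors(y, u, na=1, nb=1)
# The regressors contain only measured data, so the fit is a linear least-squares problem.
theta_hat, *_ = np.linalg.lstsq(Phi, Y, rcond=None)
eps = Y - Phi @ theta_hat   # prediction errors at the estimate
```

With this convention `theta_hat` estimates `[a1, b1]`, and no iterative search is needed.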

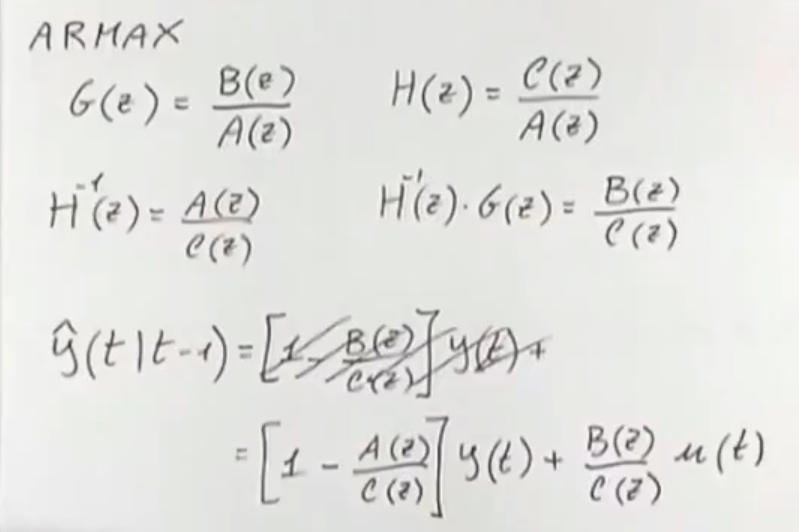

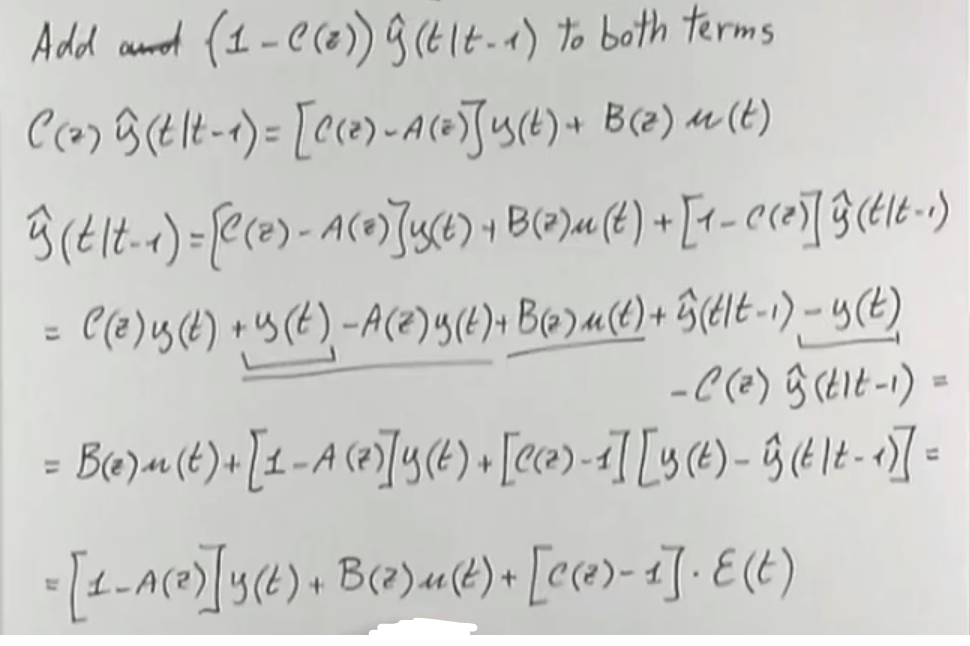

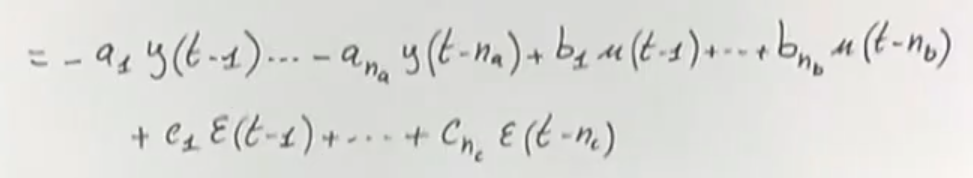

ARMAX Model (Error Function)

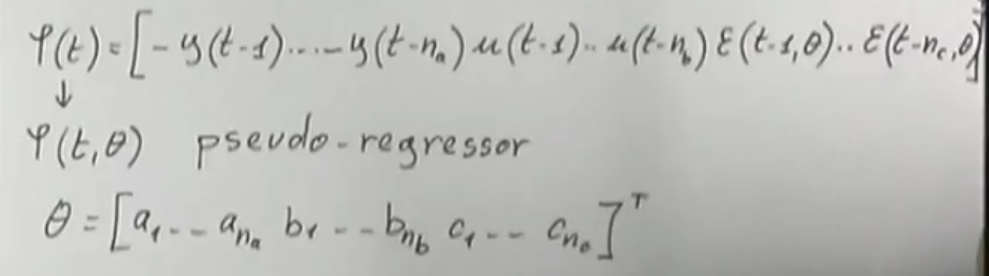

We can write the regressor as:

→ It is a pseudo-regressor because it depends on $\theta$.

RESULT:

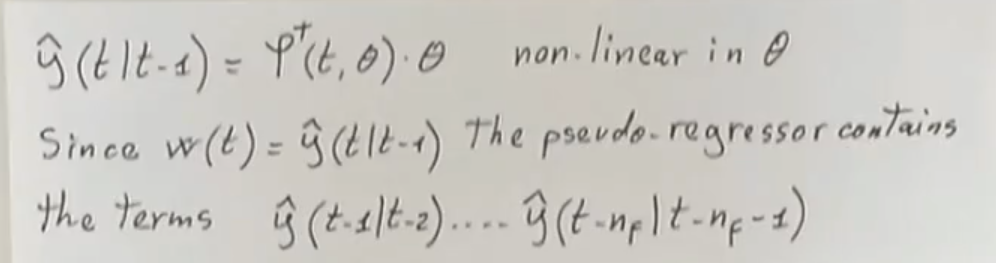

→ Unlike [ARX Model (Error Function)], the error is non-linear in $\theta$.
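
The non-linearity can be seen numerically. The sketch below assumes a first-order ARMAX model, $y(t) + a_1 y(t-1) = b_1 u(t-1) + e(t) + c_1 e(t-1)$, with pseudo-regressor $\varphi(t, \theta) = [-y(t-1), u(t-1), \varepsilon(t-1, \theta)]^T$ and the initial error set to zero. All of these are illustrative assumptions, and `armax_errors` is a hypothetical helper.

```python
import numpy as np

def armax_errors(theta, y, u):
    """Prediction errors for an assumed first-order ARMAX model, theta = [a1, b1, c1]."""
    theta = np.asarray(theta, dtype=float)
    eps = np.zeros(len(y))            # eps[0] = 0 is an assumed initialization
    for t in range(1, len(y)):
        # The pseudo-regressor contains eps[t-1], which itself depends on theta.
        phi = np.array([-y[t - 1], u[t - 1], eps[t - 1]])
        eps[t] = y[t] - phi @ theta
    return eps

# Synthetic ARMAX data (illustrative values).
rng = np.random.default_rng(0)
N = 300
a1, b1, c1 = -0.7, 1.0, 0.5
u = rng.standard_normal(N)
e = 0.1 * rng.standard_normal(N)
y = np.zeros(N)
for t in range(1, N):
    y[t] = -a1 * y[t - 1] + b1 * u[t - 1] + e[t] + c1 * e[t - 1]

eps_true = armax_errors([a1, b1, c1], y, u)

# If eps were linear (affine) in theta, the error at the midpoint of two parameter
# vectors would equal the average of the two errors. It does not.
th1 = np.array([a1, b1, 0.3])
th2 = np.array([a1, b1, -0.3])
eps_mid = armax_errors((th1 + th2) / 2, y, u)
eps_avg = (armax_errors(th1, y, u) + armax_errors(th2, y, u)) / 2
is_affine = np.allclose(eps_mid, eps_avg)
```

At the true parameters the computed errors approach the noise sequence `e` once the effect of the assumed initial value has died out.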

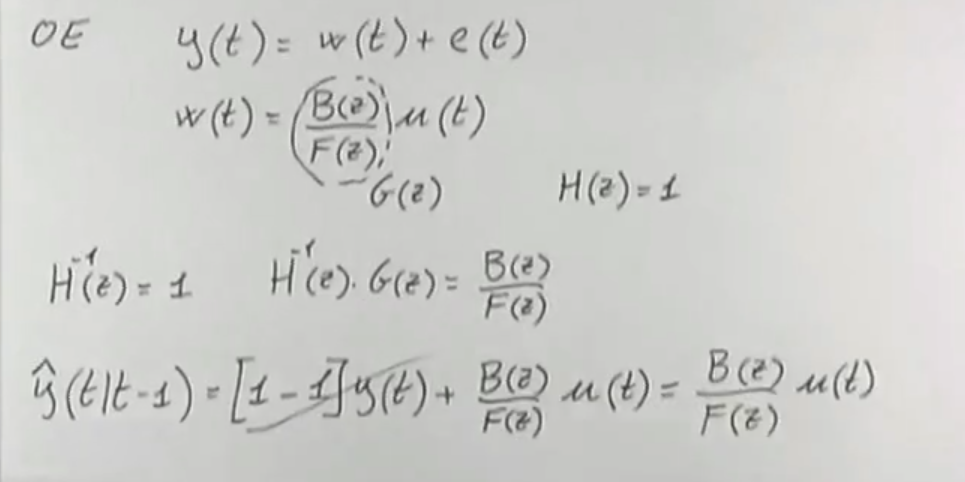

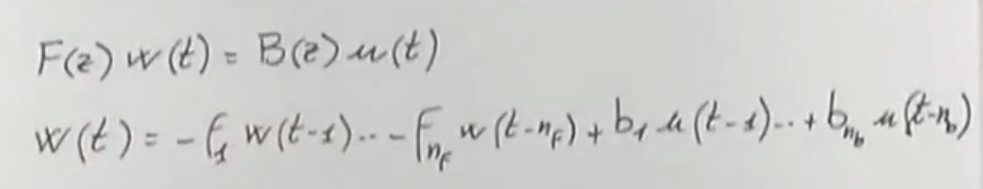

OE Model (Error Function)

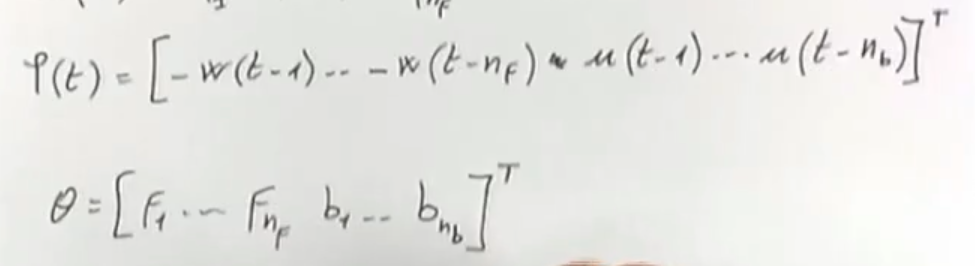

The pseudo-regressor is:

→ It is a pseudo-regressor because $\hat{y}$ depends on $\theta$, and so does $\varphi$.

→ As in [ARMAX Model (Error Function)], the error is non-linear in $\theta$.
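
A minimal sketch of the OE case, assuming a first-order model $y(t) = \frac{b_1 q^{-1}}{1 + f_1 q^{-1}} u(t) + e(t)$ with pseudo-regressor $\varphi(t, \theta) = [-\hat{y}(t-1, \theta), u(t-1)]^T$ and a zero initial prediction. The model order, the initialization and the helper name `oe_predict` are assumptions made for illustration.

```python
import numpy as np

def oe_predict(theta, u, y_hat0=0.0):
    """Predictions for an assumed first-order OE model, theta = [f1, b1]."""
    theta = np.asarray(theta, dtype=float)
    y_hat = np.zeros(len(u))
    y_hat[0] = y_hat0                 # assumed initial prediction
    for t in range(1, len(u)):
        # The pseudo-regressor uses the past *prediction*, not the measured output.
        phi = np.array([-y_hat[t - 1], u[t - 1]])
        y_hat[t] = phi @ theta
    return y_hat

# Synthetic OE data: a noise-free response w(t) plus white measurement noise e(t).
rng = np.random.default_rng(0)
N = 300
f1, b1 = -0.8, 1.5
u = rng.standard_normal(N)
e = 0.1 * rng.standard_normal(N)
w = np.zeros(N)
for t in range(1, N):
    w[t] = -f1 * w[t - 1] + b1 * u[t - 1]
y = w + e

y_hat = oe_predict([f1, b1], u)
eps = y - y_hat                       # at the true theta this recovers e(t)
```

Since each prediction feeds the next one, $\theta$ enters through products with earlier predictions, which is where the non-linearity comes from.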

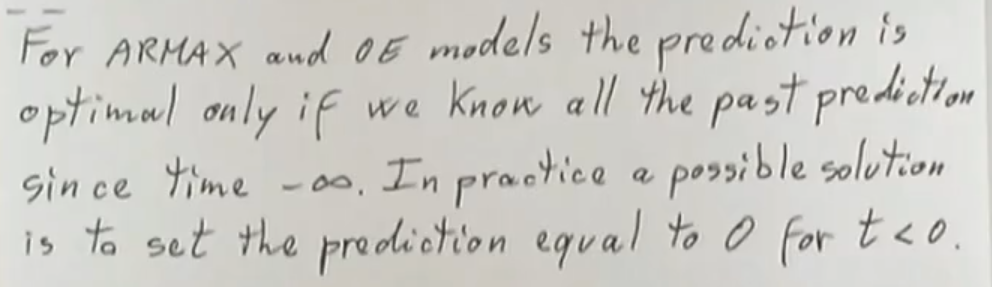

Paradox of the Optimal Prediction

- To compute the prediction of $y(t)$ we need the prediction at time $t-1$,

- But to compute the prediction of $y(t-1)$ we need the prediction at time $t-2$,

- And so on …

→ Both the input and the output, from the initial time up to $t-1$, are known (I can measure them).

For [OE Model (Error Function)], instead, the pseudo-regressor depends on the past predictions, which in turn depend on all the past outputs (back to the start of the data), so, as for [ARMAX Model (Error Function)], the problem stands.
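
One common way to make the recursion usable in practice, not stated in these notes and shown here only as an assumption, is to start it from an arbitrary initial value and rely on the effect of that guess fading out. The sketch below uses the same assumed first-order ARMAX predictor as a test case and compares two different initial errors; `armax_predict` is a hypothetical helper.

```python
import numpy as np

def armax_predict(theta, y, u, eps0):
    """Predictions for an assumed first-order ARMAX model, started from a guessed eps[0]."""
    a1, b1, c1 = theta
    eps = np.zeros(len(y))
    y_hat = np.zeros(len(y))
    eps[0] = eps0                     # unknown in practice: this is only a guess
    for t in range(1, len(y)):
        y_hat[t] = -a1 * y[t - 1] + b1 * u[t - 1] + c1 * eps[t - 1]
        eps[t] = y[t] - y_hat[t]
    return y_hat

rng = np.random.default_rng(1)
N = 200
u = rng.standard_normal(N)
y = rng.standard_normal(N)            # any measured record works for this comparison
theta = (-0.7, 1.0, 0.5)

# Same data and parameters, two different guesses for the initial error.
gap = np.abs(armax_predict(theta, y, u, 0.0) - armax_predict(theta, y, u, 5.0))
```

Here the gap between the two prediction sequences shrinks by a factor $|c_1|$ at every step, so the choice of the initial value matters only during a short transient. This relies on $|c_1| < 1$, which is consistent with the inverse-stability requirement mentioned earlier.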