Markov Process:

A Markov process is a stochastic process in which the independence assumption is partially relaxed:

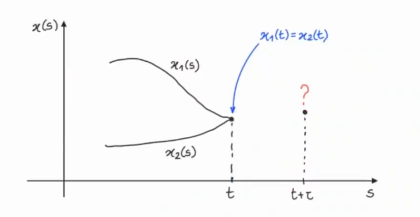

Given the current value of the process, the future values do not depend on the past.

Markov Property:

Note the difference from the independent stochastic process assumption, under which the future values are also independent of the current value.
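As a minimal sketch of this distinction, the following Python snippet builds a small discrete-time chain and computes conditional probabilities directly from its joint distribution. The two-state transition matrix `P` and the initial distribution `init` are hypothetical values chosen for illustration, not taken from these notes. The chain is Markov by construction, so this is a consistency check rather than a proof.

```python
# Hypothetical two-state chain (states 0 and 1); values are illustrative only.
# P[i][j] = P(X_{n+1} = j | X_n = i)
P = [[0.9, 0.1],
     [0.4, 0.6]]
# Hypothetical distribution of the initial state X_0
init = [0.3, 0.7]
STATES = range(2)


def joint(x0, x1, x2):
    """P(X_0 = x0, X_1 = x1, X_2 = x2) for the chain defined above."""
    return init[x0] * P[x0][x1] * P[x1][x2]


def cond_on_past_and_present(x2, x1, x0):
    """P(X_2 = x2 | X_1 = x1, X_0 = x0), computed from the joint distribution."""
    denom = sum(joint(x0, x1, k) for k in STATES)
    return joint(x0, x1, x2) / denom


def cond_on_present(x2, x1):
    """P(X_2 = x2 | X_1 = x1), with the past state X_0 summed out."""
    num = sum(joint(a, x1, x2) for a in STATES)
    denom = sum(joint(a, x1, k) for a in STATES for k in STATES)
    return num / denom


if __name__ == "__main__":
    for x1 in STATES:
        for x0 in STATES:
            print(
                f"P(X2=0 | X1={x1}, X0={x0}) =",
                round(cond_on_past_and_present(0, x1, x0), 6),
                "| P(X2=0 | X1=%d) =" % x1,
                round(cond_on_present(0, x1), 6),
            )
```

Once the present state `X1` is fixed, changing the past state `X0` leaves the conditional probability unchanged, which is the Markov property. Changing `X1` itself does change the probability, which is exactly what an independent process would not allow.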

NOTE: Conditioning on the history of the process means considering the whole sample path up to the current time: how and when the states evolved. The equivalent statement in discrete time (which is easier to understand) is given by:
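In standard notation (assumed here, with $X_n$ denoting the state at step $n$ and $x_0, \dots, x_{n+1}$ arbitrary states), the discrete-time Markov property reads:

```latex
\[
P\left(X_{n+1} = x_{n+1} \mid X_n = x_n,\, X_{n-1} = x_{n-1},\, \dots,\, X_0 = x_0\right)
= P\left(X_{n+1} = x_{n+1} \mid X_n = x_n\right)
\]
```

The left-hand side conditions on the full history of the chain, while the right-hand side keeps only the current state.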

Example:

If the processes are both Markov processes, then the probabilities: