NOTE: The professor in the slides defined the result of a Relaxation as a LB (Lower Bound), this is true as long as the original problem is a minimization problem, the feasible solution space of a relaxation is bigger than that of the original problem, so there is more solution to choose from and in the minimization problem we can find a better solution which will be LOWER than the optimal solution of the original problem.

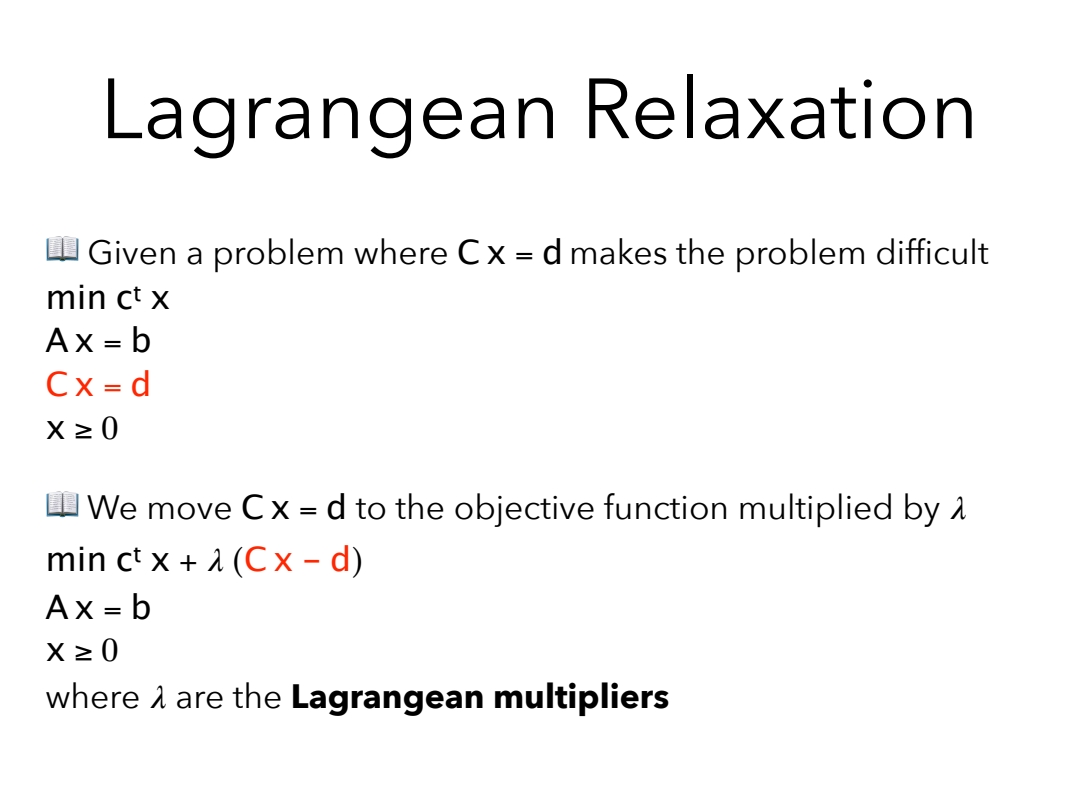

NO - Legrangean Relaxation

NOTE: The Lagrangian multipliers are cost that we add to the objective function to penalize each solution that does not respect the constraint , so it makes sense that we must have:

More Information:

Link to original

NO - Legrangean Problem

NOTE: It makes sense that being a penalizing cost , with , to find the best UB for our original problem we have to search for the , (if the original problem is a minimization problem, else the ). Wikipedia

NOTE: To understand why the problem yields the best bound think of not as a set weights previously decided but as another variable other then .

The optimal result to the Lagrangian relaxation problem will be no smaller than the optimal result to the original problem. Wikipedia

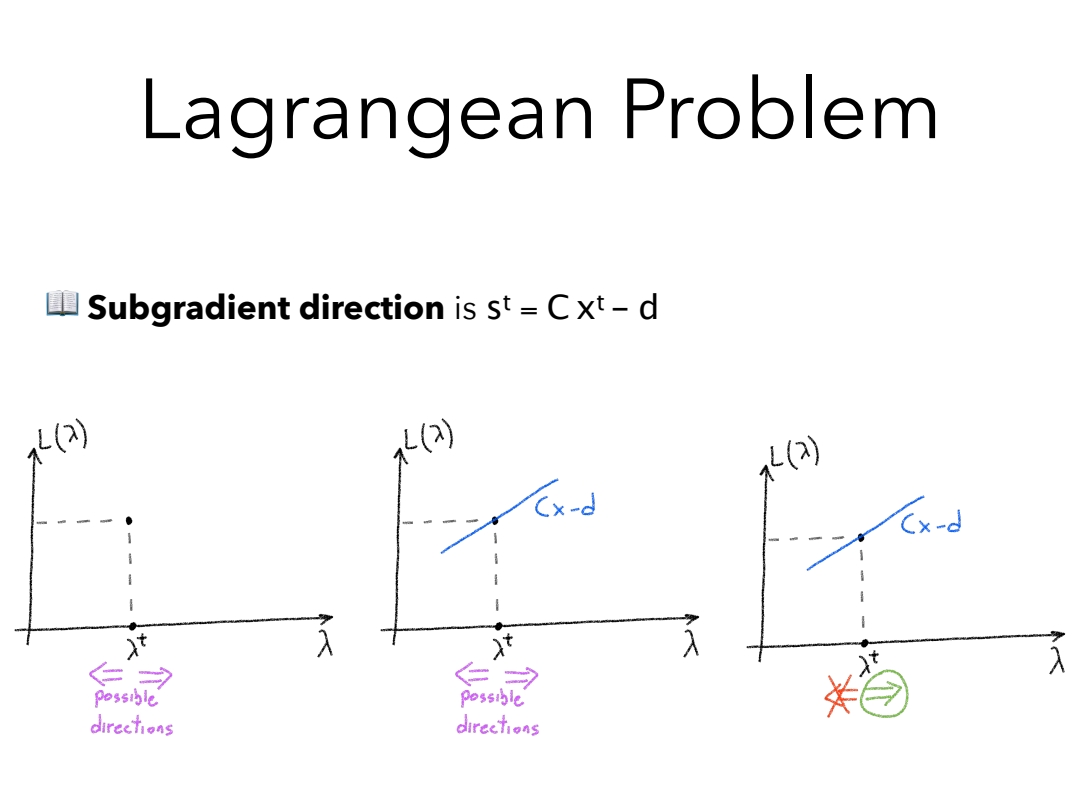

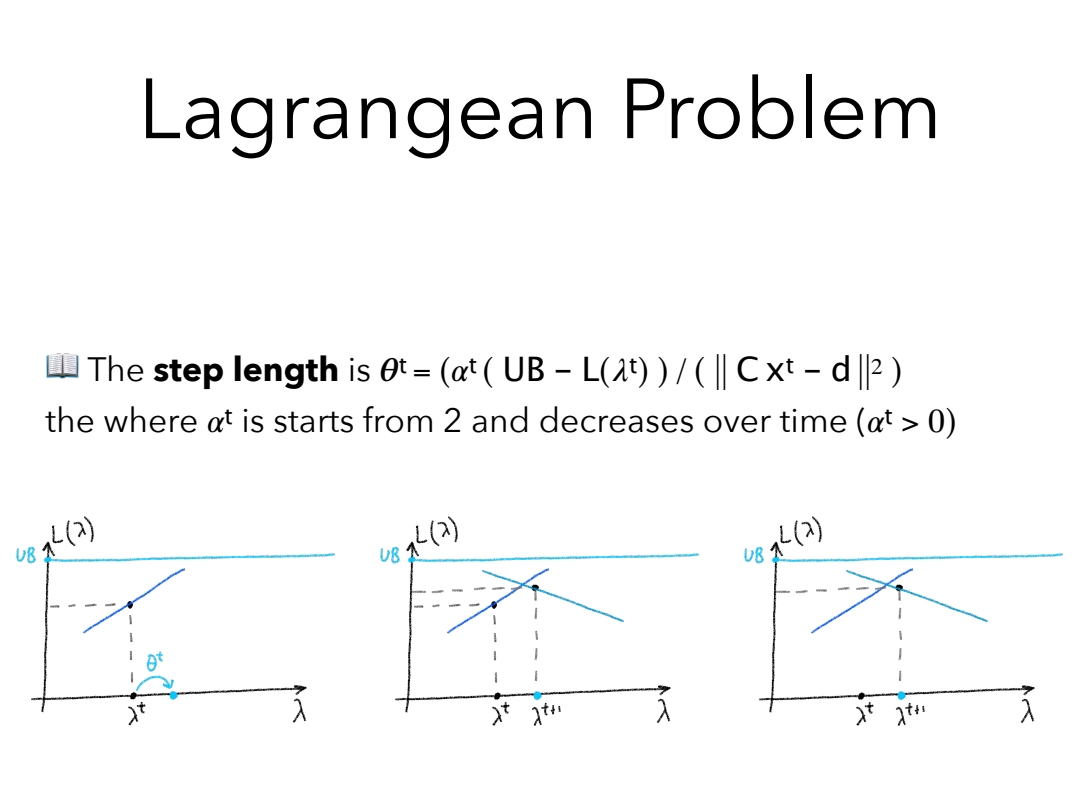

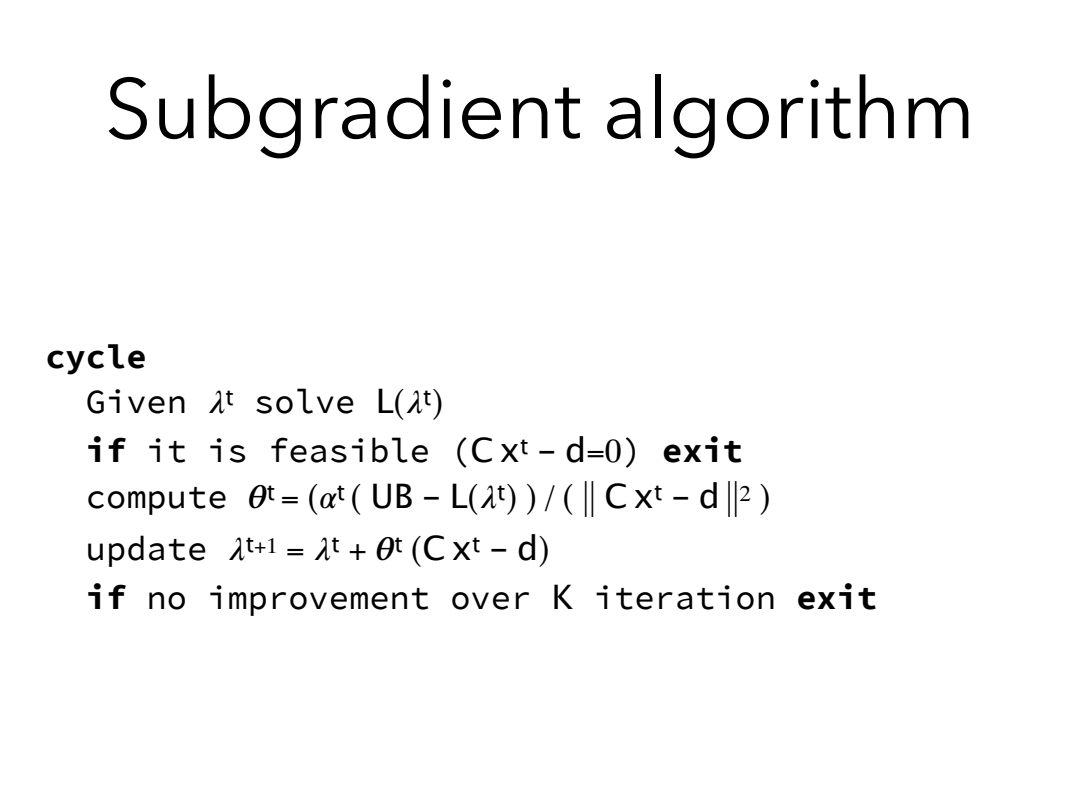

We can also solve the problem, with the sub-gradient optimization method, which result in an UB really tight which can be used as an approximate solution to the original problem.

A Lagrangian relaxation algorithm thus proceeds to explore the range of feasible values while seeking to minimize the result returned by the inner problem. Each value returned by the inner problem is a candidate upper bound to the problem, the smallest of which is kept as the best upper bound. If we additionally employ a heuristic, probably seeded by the values returned by the inner problem, to find feasible solutions to the original problem, then we can iterate until the best upper bound and the cost of the best feasible solution converge to a desired tolerance (sub-gradient optimization method). Wikipedia

Link to original