Terminology:

- ANN: Artificial Neural Network
- NN: Neural Network
- MNIST: Online database of handwritten characters (resolution usually of $28 \times 28$ pixels)

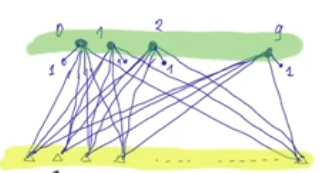

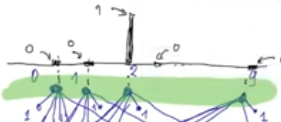

Structure of a NN

A simple NN takes as input the $784$ pixels of an MNIST handwritten character. Each input takes a value in a fixed range (for example $[0, 1]$ after normalization), where the lowest value corresponds to complete white and the highest to complete black.

If we use a simple NN with no hidden layer, and we know that the handwritten characters are only the digits from 0 to 9, the NN can have:

- INPUT LAYER: 784 features + 1 bias
- OUTPUT LAYER: 10 classes

We have to find the $785 \times 10 = 7850$ weights that solve this problem.

Now suppose we use the sigmoid function as the activation function. The sigmoid function takes values in $(0, 1)$, and each of the 10 outputs of the NN is given by this function. To choose the final output of the NN, we pick the class with the highest value across all 10 outputs.
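The forward pass described above can be sketched in a few lines of plain Python. This is only an illustration: the function names `forward` and `predict`, the placement of the bias weight at index 0, and the random weights and random "image" are all assumptions made for the example, not part of the notes.

```python
import math
import random

N_PIXELS = 784   # 28 x 28 input pixels
N_CLASSES = 10   # digits 0 to 9


def sigmoid(z):
    """Logistic sigmoid; its values lie in the interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def forward(weights, pixels):
    """Return the 10 sigmoid outputs of a network with no hidden layer.

    weights: 10 rows of 785 numbers (index 0 is the bias weight, an assumed layout).
    pixels:  784 input values.
    """
    x = [1.0] + list(pixels)  # prepend the constant bias input
    return [sigmoid(sum(w * xi for w, xi in zip(row, x))) for row in weights]


def predict(weights, pixels):
    """Pick the class whose output is the highest."""
    outputs = forward(weights, pixels)
    return max(range(N_CLASSES), key=lambda k: outputs[k])


# Hypothetical data: random weights and a random "image", for illustration only.
rng = random.Random(0)
weights = [[rng.uniform(-0.05, 0.05) for _ in range(N_PIXELS + 1)]
           for _ in range(N_CLASSES)]
pixels = [rng.random() for _ in range(N_PIXELS)]

n_weights = sum(len(row) for row in weights)  # (784 + 1) * 10
outputs = forward(weights, pixels)
digit = predict(weights, pixels)
```

With untrained random weights the predicted `digit` is meaningless; the point is only the shape of the computation: 785 inputs (including the bias), 10 sigmoid outputs, and an arg-max over them.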

Loss Function of Sigmoid NN

From the NN given before we can define its Loss function or Error function as follows:

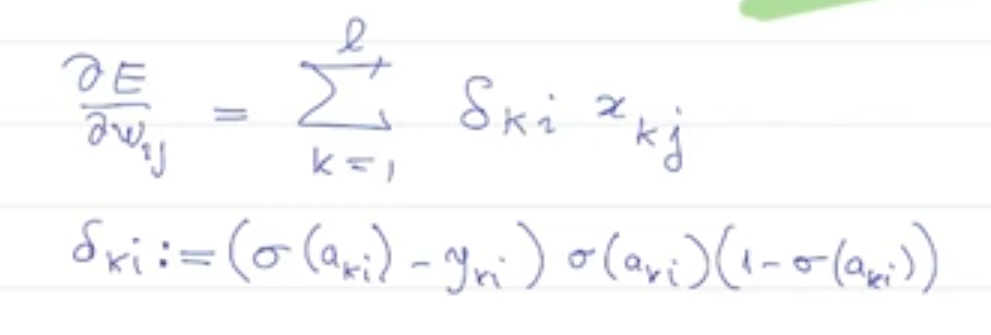

So we have that its partial derivative with respect to is:

And the partial derivatives with respect to is:

Together they form the gradient of $E$ ($\nabla E$), which can be used to update the weights:
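As a rough sketch of how such an update might look in code, the example below performs one gradient-descent step, $w \leftarrow w - \eta \nabla E$, on the network with no hidden layer. It assumes a squared-error loss $E = \frac{1}{2}\sum_k (y_k - t_k)^2$ with sigmoid outputs $y_k$ and one-hot targets $t_k$. That loss, the learning rate $\eta$, and all names and data below are assumptions for illustration, and may differ from the loss shown in the figure.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(weights, x):
    """Sigmoid outputs; x already includes the constant bias input at index 0."""
    return [sigmoid(sum(w * xi for w, xi in zip(row, x))) for row in weights]


def squared_error(outputs, targets):
    # ASSUMED loss: E = 1/2 * sum_k (y_k - t_k)^2
    return 0.5 * sum((y - t) ** 2 for y, t in zip(outputs, targets))


def gradient(weights, x, targets):
    """Under the assumed loss: dE/dw[k][j] = (y_k - t_k) * y_k * (1 - y_k) * x_j."""
    grad = []
    for y, t in zip(forward(weights, x), targets):
        delta = (y - t) * y * (1.0 - y)  # chain rule through the sigmoid
        grad.append([delta * xi for xi in x])
    return grad


def gradient_step(weights, grad, learning_rate):
    """Move every weight a small step against its partial derivative."""
    return [[w - learning_rate * g for w, g in zip(w_row, g_row)]
            for w_row, g_row in zip(weights, grad)]


# Hypothetical example: one random "image" whose true class is the digit 3.
rng = random.Random(0)
x = [1.0] + [rng.random() for _ in range(784)]  # bias input + 784 pixels
targets = [0.0] * 10
targets[3] = 1.0
weights = [[rng.uniform(-0.05, 0.05) for _ in range(785)] for _ in range(10)]

loss_before = squared_error(forward(weights, x), targets)
weights = gradient_step(weights, gradient(weights, x, targets), learning_rate=0.01)
loss_after = squared_error(forward(weights, x), targets)
```

On this single example the loss after the step is lower than before, which is the behaviour a gradient-descent update is meant to produce.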

Sign Rule

and will always have the same sign.